ContextSDK - Angel Round, Dieter Rappold joining as CEO, first large customers

Over the last few months, a ton has happened with ContextSDK, a new developer tool to optimize apps based on the user’s current context:

Today, apps often have little logic when it comes to timing in-app communications or upsells. “Every day, billions of prompts and popups are shown at suboptimal times, resulting in annoyed users and increased churn,” said Felix Krause, co-founder of ContextSDK. “With today’s computing power, precise smartphone sensor data, combined with the latest machine learning algorithms, we can do much better than that.” Felix Krause aims to build the foundation for the next generation of mobile apps.

Angel Round

ContextSDK announces its first funding round, led by high profile Business Angels such as Peter Steinberger (founder of PSPDFKit), Johannes Moser (founder of Immerok), Michael Schuster (former Partner Speedinvest), Christopher Zemina (founder Friday Finance, GetPliant), Ionut Ciobotaru (former CEO Verve Group), Eric Seufert (Heracles Capital), Moataz Soliman (co-Founder Instabug) and others.

Dieter Rappold joining as CEO

Dieter Rappold has recently joined ContextSDK as co-founder and CEO. With more than 20 years of experience in building and scaling companies, Dieter will be responsible for the company’s growth and operations.

ContextSDK Performance

One recently onboarded customer, as a case study, showed 500 million upselling prompts, resulting in 24 million sales. With ContextSDK they experienced a remarkable +43% increase in conversion rates for new customers.

ContextSDK is an extremely lightweight SDK for iOS apps, using only 0.2% of CPU, less than a MB of memory footprint, and less than a MB added to the app’s binary size. It is fully GDPR compliant, not collecting any PII at any point.

Privacy

Recently passed laws across the world signify a clear trend towards user privacy and data protection, resulting in many previously used services being deemed unlawful, or only offering limited capabilities.

ContextSDK was built from the ground up with privacy in mind. All processing, including the execution of machine learning models, happens on-device. ContextSDK operates without any type of PII (Personally Identifiable Information), thanks to a completely new and unique mechanism built to fully protect the user’s privacy while also helping app developers achieve their business goals.

New Website

We’ve also just launched our new ContextSDK website, now including more details on how ContextSDK works, and how it can help your business.

Interested in using ContextSDK?

As ContextSDK is a brand-new product, we carefully select the companies we want to work with. We’ve been seeing the best performance improvements for apps with a minimum of 20,000 monthly active users, as that’s where our machine learning approach really shines. If you believe your app would be a good fit, sign up for ContextSDK here.

We’re hiring

We’re hiring a Data Scientist. Check out our careers page.

Full Press Release

Read the full press release on contextsdk.com

Tags: ios, context, sdk, swift, upsell, in-app

ContextSDK - Introducing the most intelligent way to know how and when to monetize your user

Today, whether your app is opened when your user is taking the bus to work, in bed about to go to sleep, or when out for drinks with friends, your product experience is the same. However, apps of the future will perfectly fit into the context of their users’ environment.

As app usage has exploded over the past decade, personalization and user context are becoming increasingly important to grow and retain your userbase. ContextSDK enables you to create intelligent products that adapt to users’ preferences and needs, all while preserving the user’s privacy and battery life using only on-device processing.

ContextSDK leverages machine learning to make optimized suggestions when to upsell an in-app purchase, what type of ad and dynamic copy to display, or predict what a user is about to do in your app, and dynamically change the product flows to best fit their current situation.

Commute on the train

Alone and bored at night

In a loud bar with friends

Your users have different needs based on the context of what they are doing and where they are. Shouldn’t your app be more personalized to better serve them?

ContextSDK takes hundreds of signals and builds a highly accurate and complex model, to correlate what a user is doing and the impact it has on in-app conversion events.

ContextSDK performance

Meta has published data on how “less is more” when it comes to notifications and user prompts: Even though in the short-term, just showing something on every possible occasion will increase your chances of the user engaging, in the long-run, you are better off showing fewer prompts, only when the user is most likely to convert.

Context matters! Large tech companies are already using those techniques to optimise their apps, and now is your chance to benefit from it as well. Sign up to get started.

Tags: ios, context, sdk, swift, upsell, in-app

iOS Privacy: Announcing InAppBrowser.com - see what JavaScript commands get injected through an in-app browser

Please note that this article is outdated (August 2022). Importantly, the article does not claim that any data logging or transmission is actively occurring. Instead, it highlights the potential technical capabilities of in-app browsers to inject JavaScript code, which could theoretically be used to monitor user interactions.

Last week I published a report on the risks of mobile apps using in-app browsers. Some apps, like Instagram and Facebook, inject JavaScript code into third party websites that cause potential security and privacy risks to the user.

I was so happy to see the article featured by major media outlets across the globe, like The Guardian and The Register, generate over a million impressions on Twitter, and rank #1 on Hacker News for more than 12 hours. After reading through the replies and DMs, I saw a common question across the community:

“How can I verify what apps do in their webviews?”

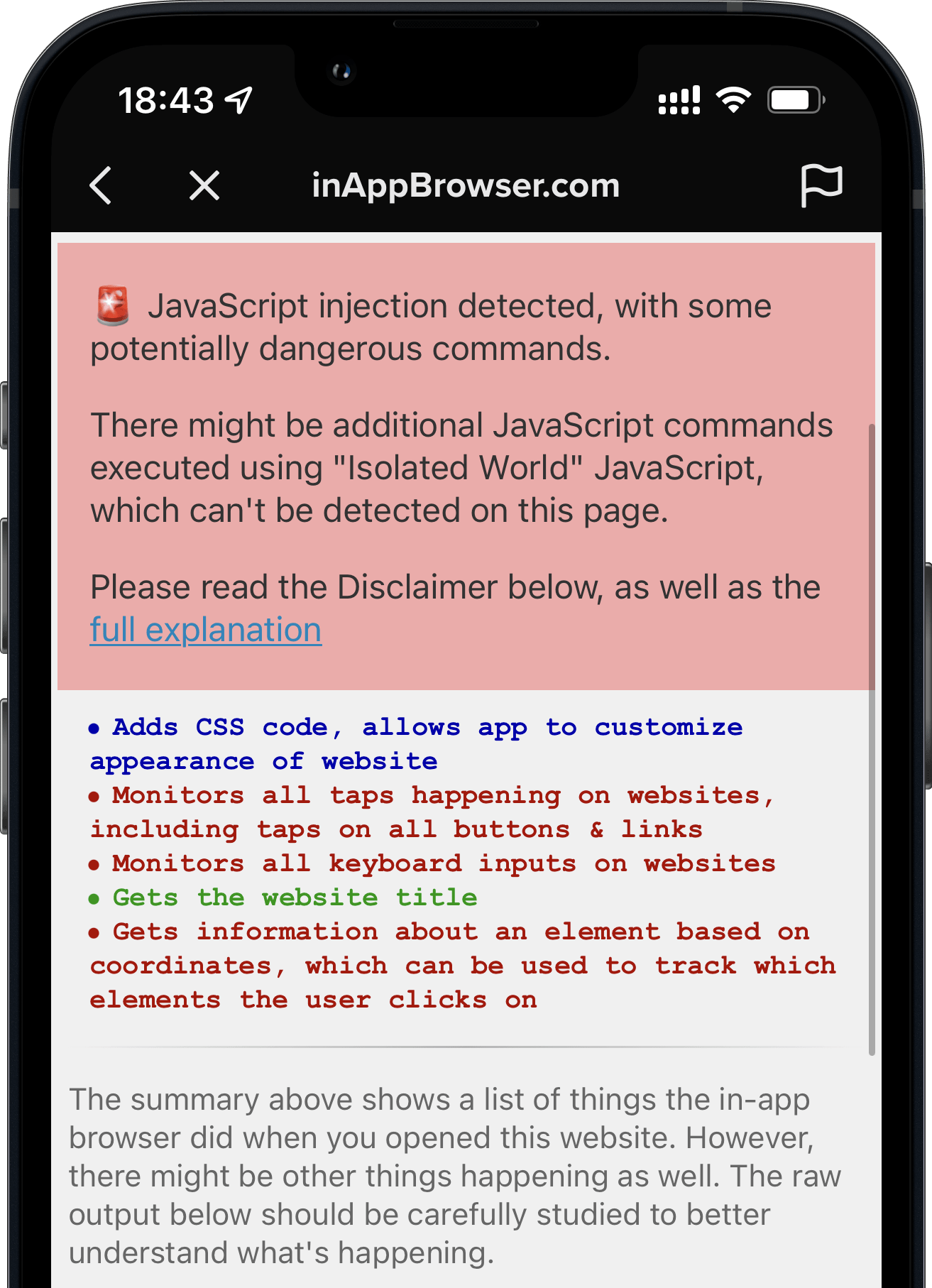

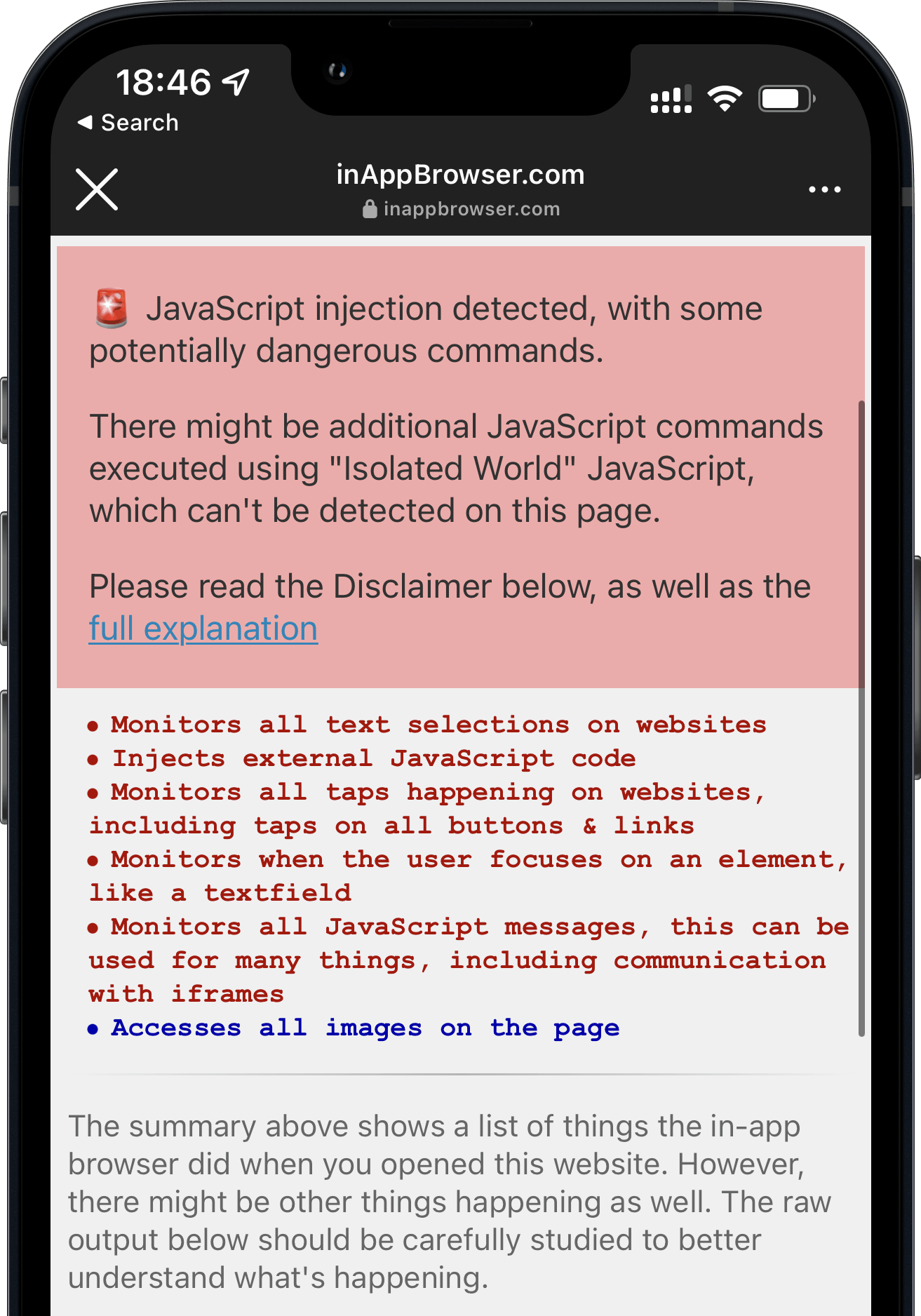

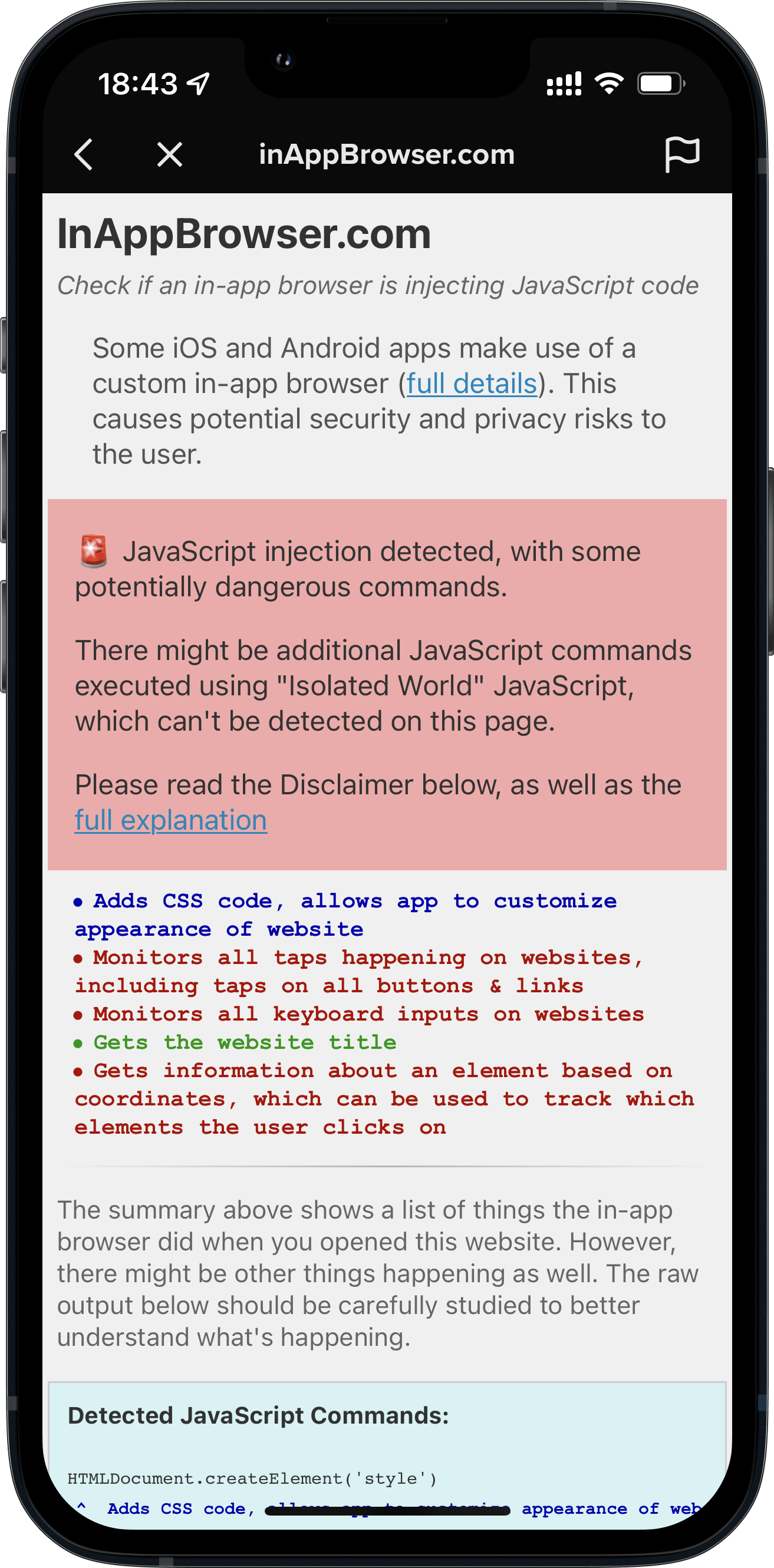

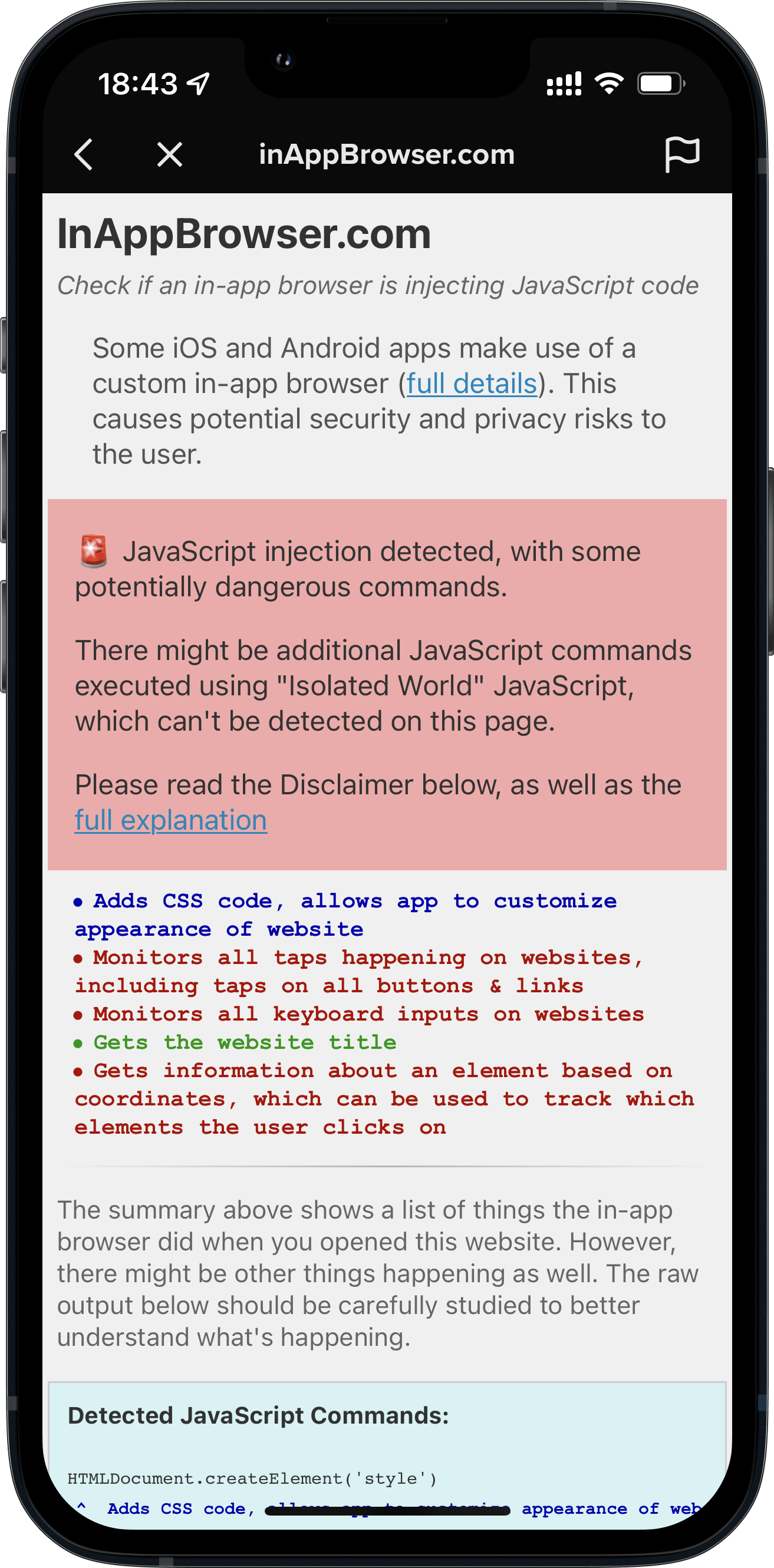

Introducing InAppBrowser.com, a simple tool to list the JavaScript commands executed by the iOS app rendering the page.

To try this tool yourself:

- Open an app you want to analyze

- Share the url https://InAppBrowser.com somewhere inside the app (e.g. send a DM to a friend, or post to your feed)

- Tap on the link inside the app to open it

- Read the report on the screen

TikTok's In-App Browser injecting code to observe all taps and keyboard inputs, which can include passwords and credit cards

I started using this tool to analyze the most popular iOS apps that have their own in-app browser. Below are the results I’ve found.

For this analysis I have excluded all third party iOS browsers (Chrome, Brave, etc.), as they use JavaScript to offer some of their functionality, like a password manager. Apple requires all third party iOS browser apps to use the Safari rendering engine WebKit.

Important Note: This tool can’t detect all JavaScript commands executed, and it doesn’t show any tracking the app might do using native code (like custom gesture recognisers). More details on this below.

Fully Open Source

InAppBrowser.com is designed for everybody to verify for themselves what apps are doing inside their in-app browsers. I have decided to open source the code used for this analysis; you can check it out on GitHub. This allows the community to update and improve this script over time.

iOS Apps that have their own In-App Browser

- Option to open in default browser: Does the app provide a button to open the currently shown link in the default browser?

- Modify page: Does the app inject JavaScript code into third party websites to modify its content? This includes adding tracking code (like inputs, text selections, taps, etc.), injecting external JavaScript files, as well as creating new HTML elements.

- Fetch metadata: Does the app run JavaScript code to fetch website metadata? This is a harmless thing to do, and doesn’t cause any real security or privacy risks.

- JS: A link to the JavaScript code that I was able to detect. Disclaimer: There might be other code executed. The code might not be a 100% accurate representation of all JS commands.

| App | Option to open in default browser | Modify page | Fetch metadata | JS | Updated |
|---|---|---|---|---|---|
| TikTok | ⛔️ | Yes | Yes | .js | 2022-08-18 |
| Instagram | ✅ | Yes | Yes | .js | 2022-08-18 |
| FB Messenger | ✅ | Yes | Yes | .js | 2022-08-18 |
| Facebook | ✅ | Yes | Yes | .js | 2022-08-18 |
| Amazon | ✅ | None | Yes | .js | 2022-08-18 |
| Snapchat | ✅ | None | None | | 2022-08-18 |
| Robinhood | ✅ | None | None | | 2022-08-18 |

Click on the Yes or None on the above table to see a screenshot of the app.

Important: Just because an app injects JavaScript into external websites, doesn’t mean the app is doing anything malicious. There is no way for us to know the full details on what kind of data each in-app browser collects, or how or if the data is being transferred or used. This publication is stating the JavaScript commands that get executed by each app, as well as describing what effect each of those commands might have. For more background on the risks of in-app browsers, check out last week’s publication.

Even if some of the apps above have green checkmarks, they might use the new WKContentWorld isolated JavaScript, which I’ll describe below.

TikTok

When you open any link on the TikTok iOS app, it’s opened inside their in-app browser. While you are interacting with the website, TikTok subscribes to all keyboard inputs (including passwords, credit card information, etc.) and every tap on the screen, like which buttons and links you click.

- TikTok iOS subscribes to every keystroke (text inputs) happening on third party websites rendered inside the TikTok app. This can include passwords, credit card information and other sensitive user data (`keypress` and `keydown`). We can’t know what TikTok uses the subscription for, but from a technical perspective, this is the equivalent of installing a keylogger on third party websites.

- TikTok iOS subscribes to every tap on any button, link, image or other component on websites rendered inside the TikTok app.

- TikTok iOS uses a JavaScript function to get details about the element the user clicked on, like an image (`document.elementFromPoint`)

Here is a list of all JavaScript commands I was able to detect.

Update: TikTok’s statement, as reported by Forbes.com:

The company confirmed those features exist in the code, but said TikTok is not using them.

“Like other platforms, we use an in-app browser to provide an optimal user experience, but the Javascript code in question is used only for debugging, troubleshooting and performance monitoring of that experience — like checking how quickly a page loads or whether it crashes,” spokesperson Maureen Shanahan said in a statement.

The above statement confirms my findings. TikTok injects code into third party websites through their in-app browsers that behaves like a keylogger. However, TikTok claims it’s not being used.

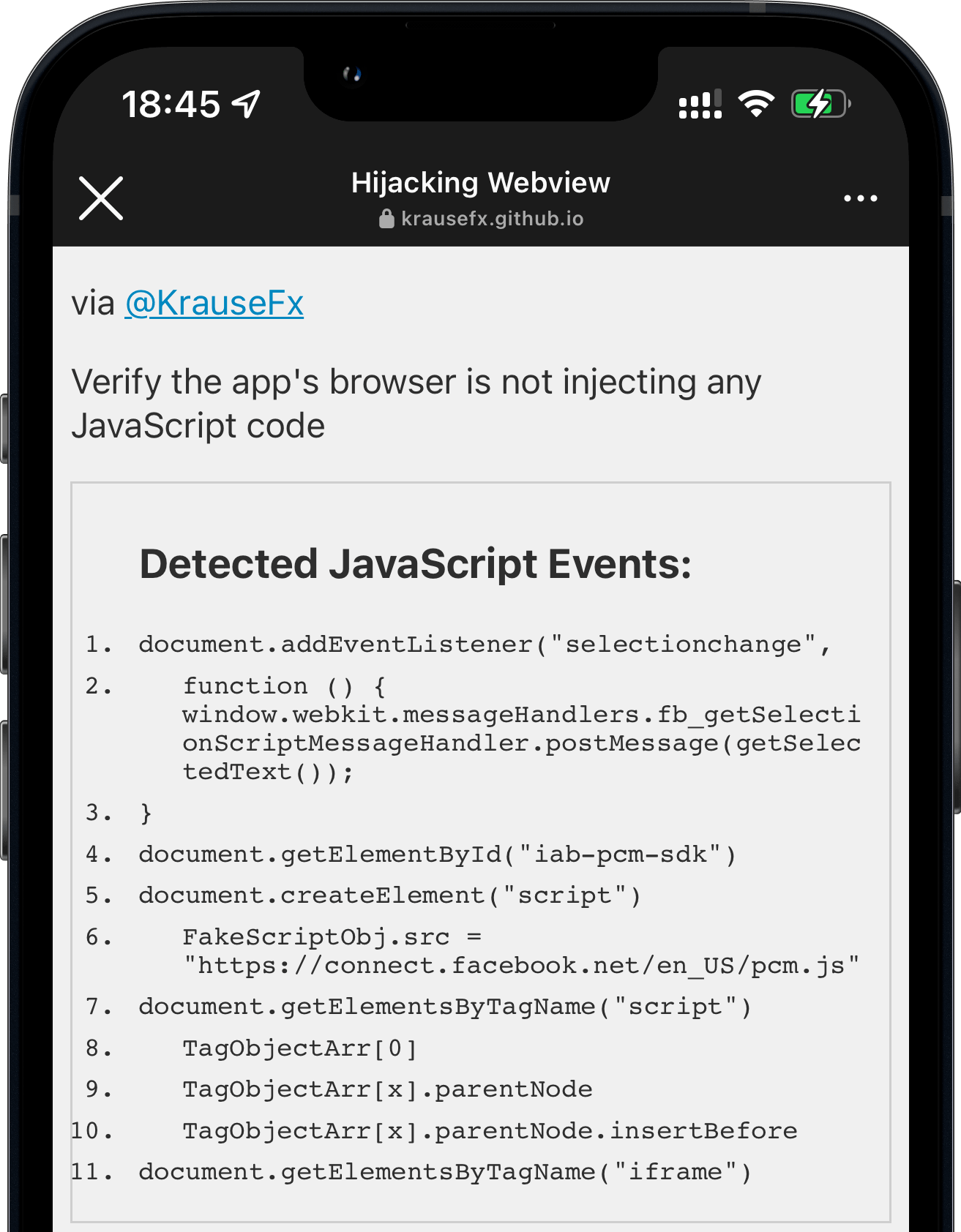

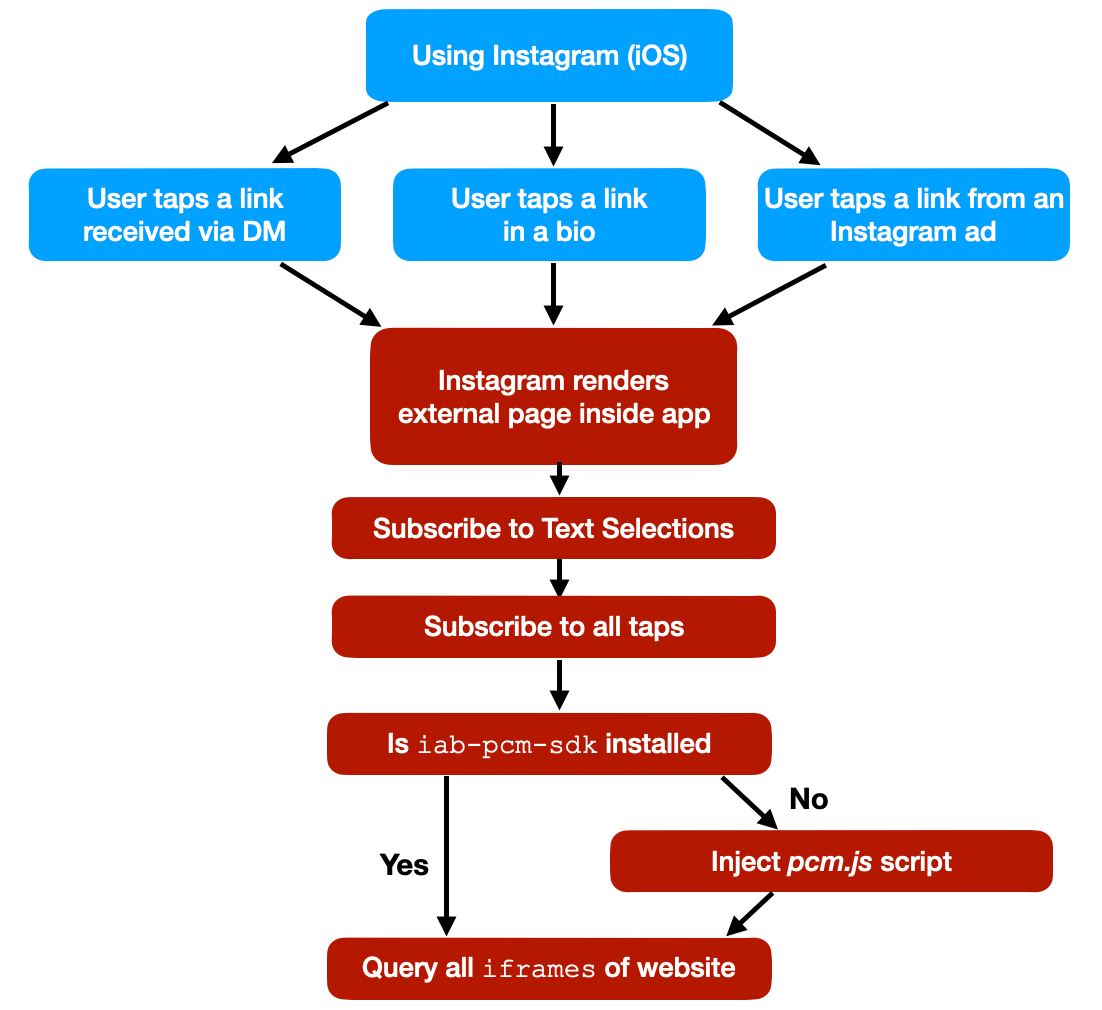

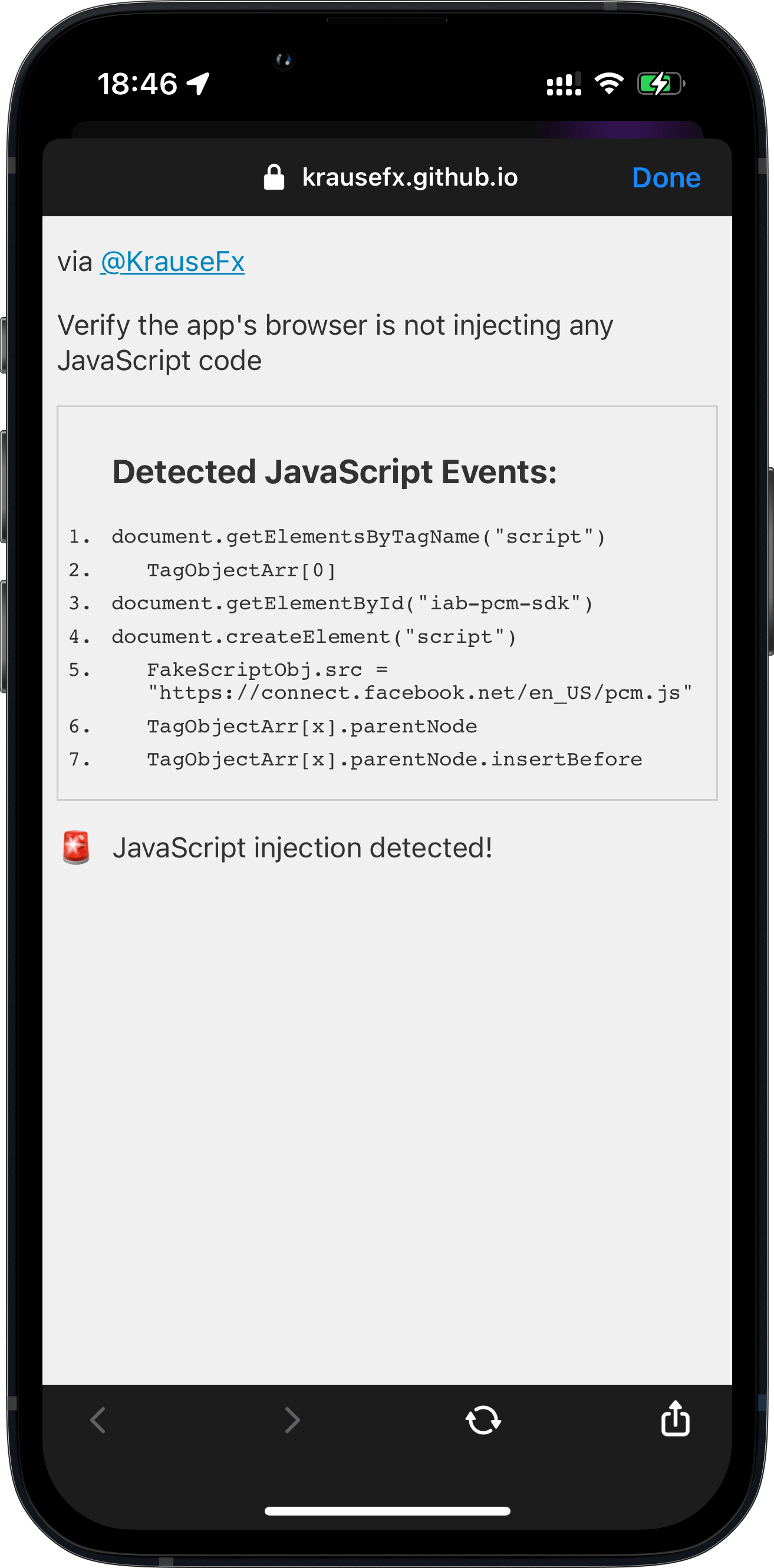

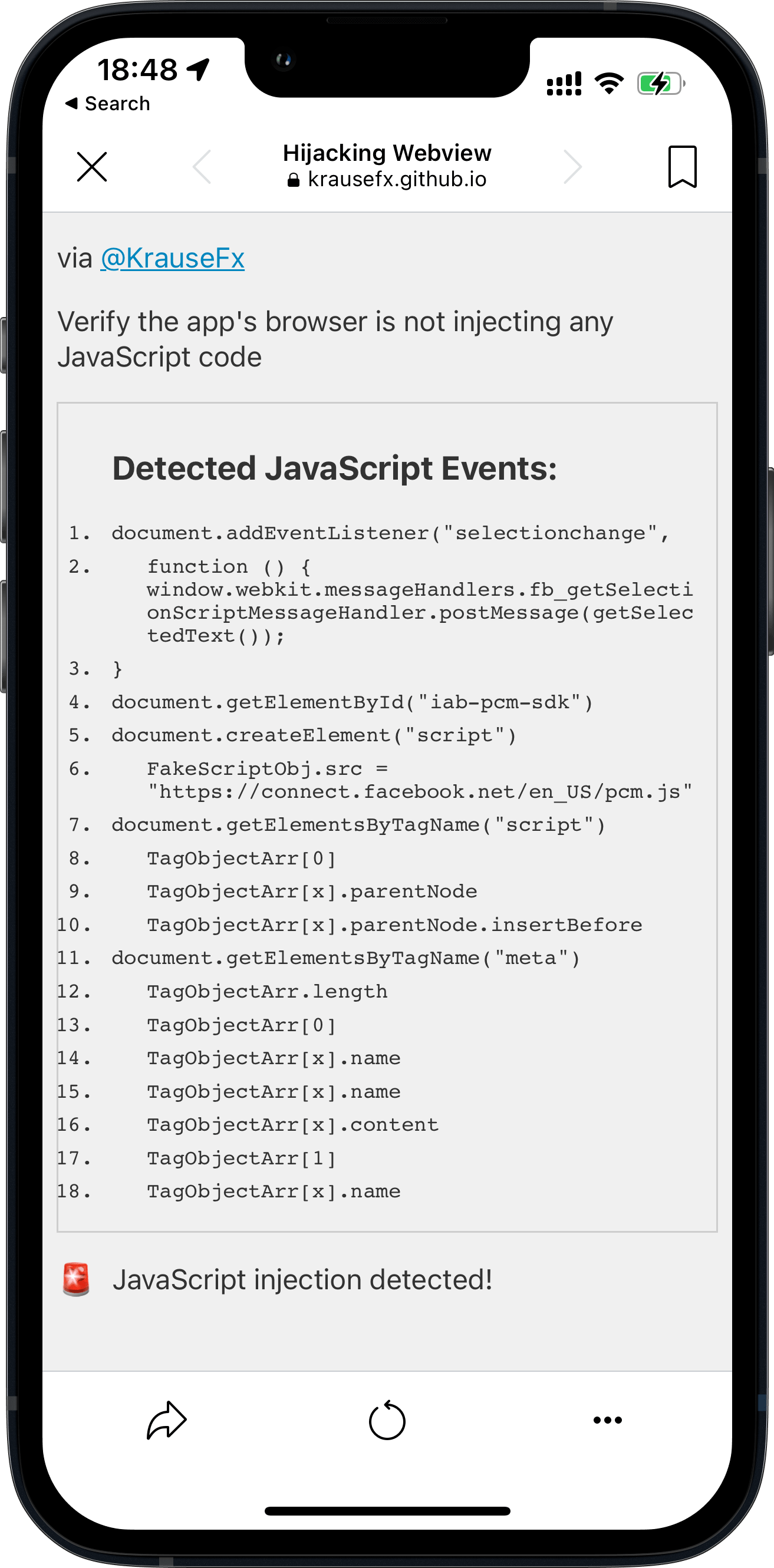

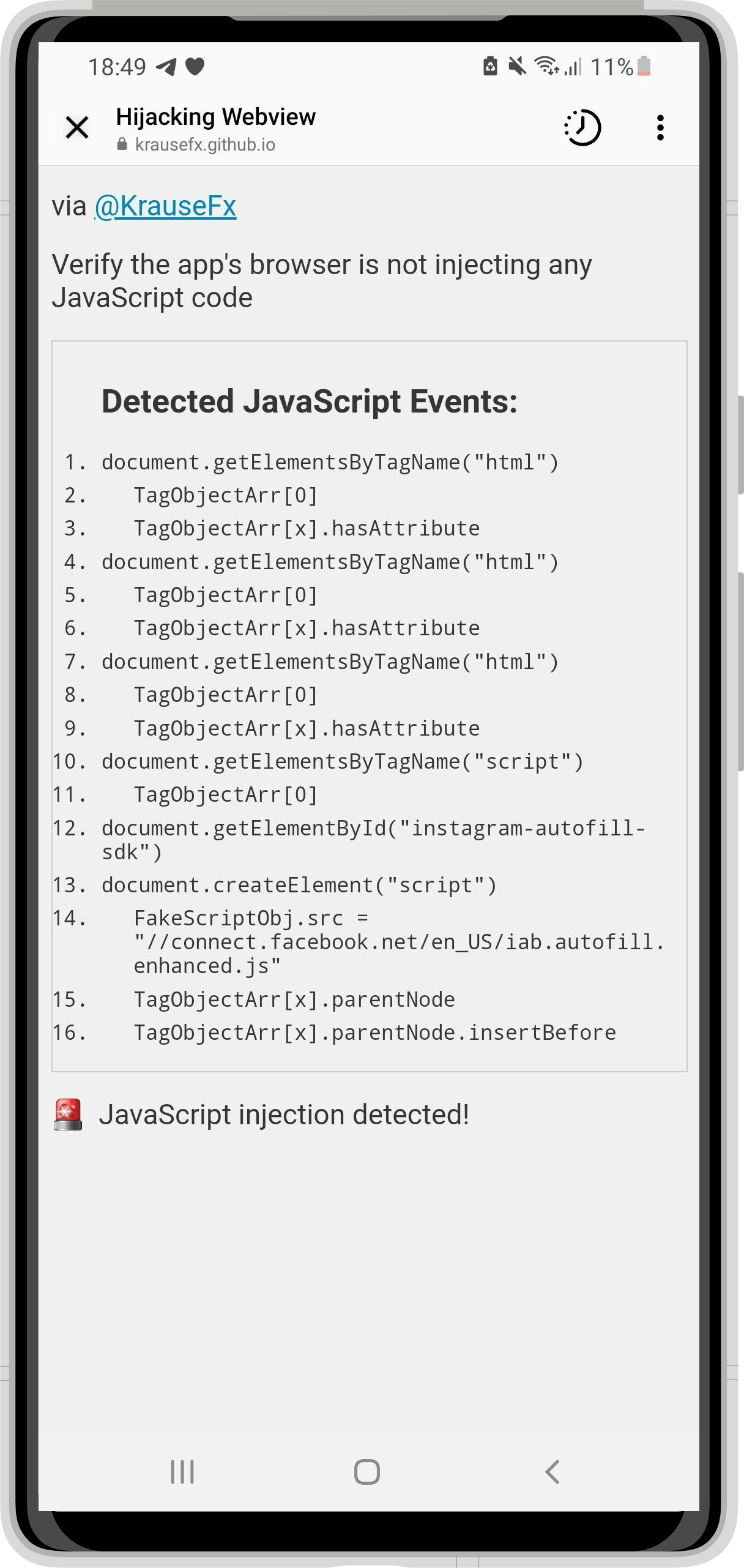

Instagram does more than just inserting pcm.js

Last week’s post talked about how Meta injects the pcm.js script onto third party websites. Meta claimed they only inject the script to respect the user’s ATT choice, and for additional “security and user features”.

The code in question allows us to respect people’s privacy choices by helping aggregate events (such as making a purchase online) from pixels already on websites, before those events are used for advertising or measurement purposes.

After improving the JavaScript detection, I now found some additional commands Instagram executes:

- Instagram iOS subscribes to every tap on any button, link, image or other component on external websites rendered inside the Instagram app.

- Instagram iOS subscribes to every time the user selects a UI element (like a text field) on third party websites rendered inside the Instagram app.

Here is a list of all JavaScript commands I was able to detect.

Note on subscribing: When I talk about “App subscribes to”, I mean that the app subscribes to the JavaScript events of that type (e.g. all taps). There is no way to verify what happens with the data.
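To make “subscribes to” more concrete, here is a minimal sketch of how a page could record which event types another script registers listeners for. It assumes a runtime with a global `EventTarget` (browsers, recent Node.js). The helper name `watchSubscriptions` is hypothetical, and this is not necessarily how InAppBrowser.com does it. The sketch only sees that a listener was registered, not what the listener does with the events.

```javascript
// Hypothetical helper: wraps addEventListener on one target and records
// the event types that get subscribed to. It cannot see what a listener
// later does with the events it receives.
function watchSubscriptions(target) {
  const seen = [];
  const originalAdd = target.addEventListener;
  target.addEventListener = function (type, listener, options) {
    seen.push(type); // remember the event type
    return originalAdd.call(this, type, listener, options);
  };
  return seen;
}

// Stand-in for `document`, so the sketch also runs outside a browser.
const page = new EventTarget();
const seen = watchSubscriptions(page);

// Simulates listeners registered by a script injected from a host app.
page.addEventListener('keydown', () => {});
page.addEventListener('click', () => {});

console.log(seen);
```

In a real page you would pass `document` or `window` instead of the stand-in target, and do so before any injected script has a chance to run.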

Apps can hide their JavaScript activities from this tool

With iOS 14.3 (December 2020), Apple introduced support for running JavaScript code in the context of a specified frame and content world. JavaScript commands executed using this approach can still fully access the third party website, but can’t be detected by the website itself (in this case a tool like InAppBrowser.com).

Use a WKContentWorld object as a namespace to separate your app’s web environment from the environment of individual webpages or scripts you execute. Content worlds help prevent issues that occur when two scripts modify environment variables in conflicting ways. […] Changes you make to the DOM are visible to all script code, regardless of content world.

This new system was initially built so that website operators can’t interfere with JavaScript code of browser plugins, and to make fingerprinting more difficult. As a user, you can check the source code of any browser plugin, as you are in control over the browser itself. However with in-app browsers we don’t have a reliable way to verify all the code that is executed.
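The isolation idea can be illustrated with an analogy outside of WebKit. The sketch below uses Node.js `vm` contexts as stand-ins for two content worlds. This is only an analogy under that assumption, not how WKContentWorld is implemented, and the names `pageWorld`, `appWorld` and `sharedDom` are made up for illustration. Each context has its own built-ins, so instrumentation installed in one world does not notice calls made in the other, while changes to the shared object are visible to both.

```javascript
const vm = require('node:vm');

// Shared state, standing in for the DOM that every content world can see.
const sharedDom = { title: 'Original title' };
const detected = [];

// "Page world": the website instruments a built-in to detect calls,
// similar in spirit to what a detection page does.
const pageWorld = vm.createContext({ dom: sharedDom, detected });
vm.runInContext(`
  const originalStringify = JSON.stringify;
  JSON.stringify = function (...args) {
    detected.push('JSON.stringify');
    return originalStringify.apply(this, args);
  };
`, pageWorld);

// "App world": a separate context with its own, unpatched built-ins.
const appWorld = vm.createContext({ dom: sharedDom });
vm.runInContext(`
  JSON.stringify(dom);          // not seen by the page's instrumentation
  dom.title = 'Changed by app'; // but the shared state is still writable
`, appWorld);

const detectedAfterApp = detected.length;

// The same call made from inside the page world is detected.
vm.runInContext('JSON.stringify(dom);', pageWorld);

console.log({ detectedAfterApp, detectedTotal: detected.length, title: sharedDom.title });
```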

So when Meta or TikTok want to hide the JavaScript commands they execute on third party websites, all they’d need to do is to update their JavaScript runner:

```swift
// Currently used code by Meta & TikTok
self.evaluateJavaScript(javascript)

// Updated to use the new system
self.evaluateJavaScript(javascript, in: nil, in: .defaultClient, completionHandler: { _ in })
```

For example, Firefox for iOS already uses the new WKContentWorld system. Due to the open source nature of Firefox and Google Chrome for iOS it’s easy for us as a community to verify nothing suspicious is happening.

Especially after the publicity of last week’s post, as well as this one, tech companies that still use custom in-app browsers will very quickly update to use the new WKContentWorld isolated JavaScript system, so their code becomes undetectable to us.

Hence, it becomes more important than ever to find a solution to end the use of custom in-app browsers for showing third party content.

Valid use-cases for in-app webviews

There are many valid reasons to use an in-app browser, particularly when an app accesses its own websites to complete specific transactions. For example, an airline app might not have the seat selection implemented natively for their whole airplane fleet. Instead they might choose to reuse the web-interface they already have. If they weren’t able to inject cookies or JavaScript commands inside their webview, the user would have to re-login while using the app, just so they can select their seat. Shoutout to Venmo, which uses their own in-app browser for all their internal websites (e.g. Terms of Service), but as soon as you tap on an external link, they automatically transition over to SFSafariViewController.

However, there are data privacy & integrity issues when you use in-app browsers to visit non-first party websites, such as how Instagram and TikTok show all external websites inside their app. More importantly, those apps rarely offer an option to use a standard browser as default, instead of the in-app browser. And in some cases (like TikTok), there is no button to open the currently shown page in the default browser.

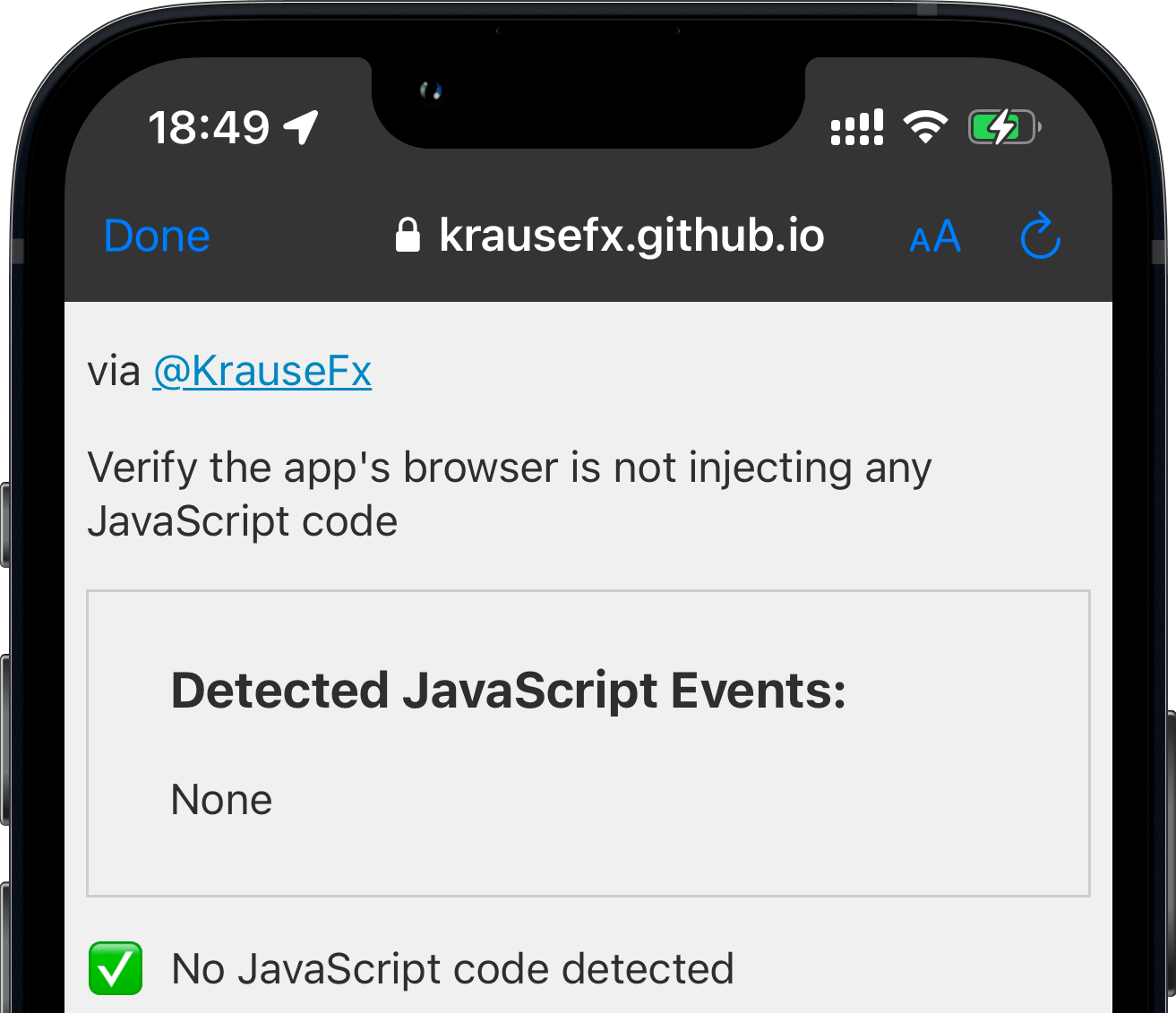

iOS Apps that use Safari

The apps below follow Apple’s recommendation of using Safari or SFSafariViewController for viewing external websites. More context on SFSafariViewController in the original article.

All apps that use SFSafariViewController or Default Browser are on the safe side, and there is no way for apps to inject any code onto websites, even with the new WKContentWorld system.

| App | Technology | Updated |
|---|---|---|
| | SFSafariViewController | 2022-08-15 |
| | SFSafariViewController | 2022-08-15 |
| | Default Browser | 2022-08-15 |
| Slack | Default Browser | 2022-08-16 |
| Google Maps | SFSafariViewController | 2022-08-15 |
| YouTube | Default Browser | 2022-08-15 |
| Gmail | Default Browser | 2022-08-15 |
| Telegram | SFSafariViewController | 2022-08-15 |
| Signal | SFSafariViewController | 2022-08-15 |
| Tweetbot | SFSafariViewController | 2022-08-15 |
| Spotify | Default Browser | 2022-08-15 |
| Venmo | SFSafariViewController | 2022-08-15 |
| Microsoft Teams | Default Browser | 2022-08-16 |
| Microsoft Outlook | Default Browser or Edge | 2022-08-16 |
| Microsoft OneNote | Default Browser | 2022-08-16 |
| Twitch | Default Browser | 2022-08-16 |

What can we do?

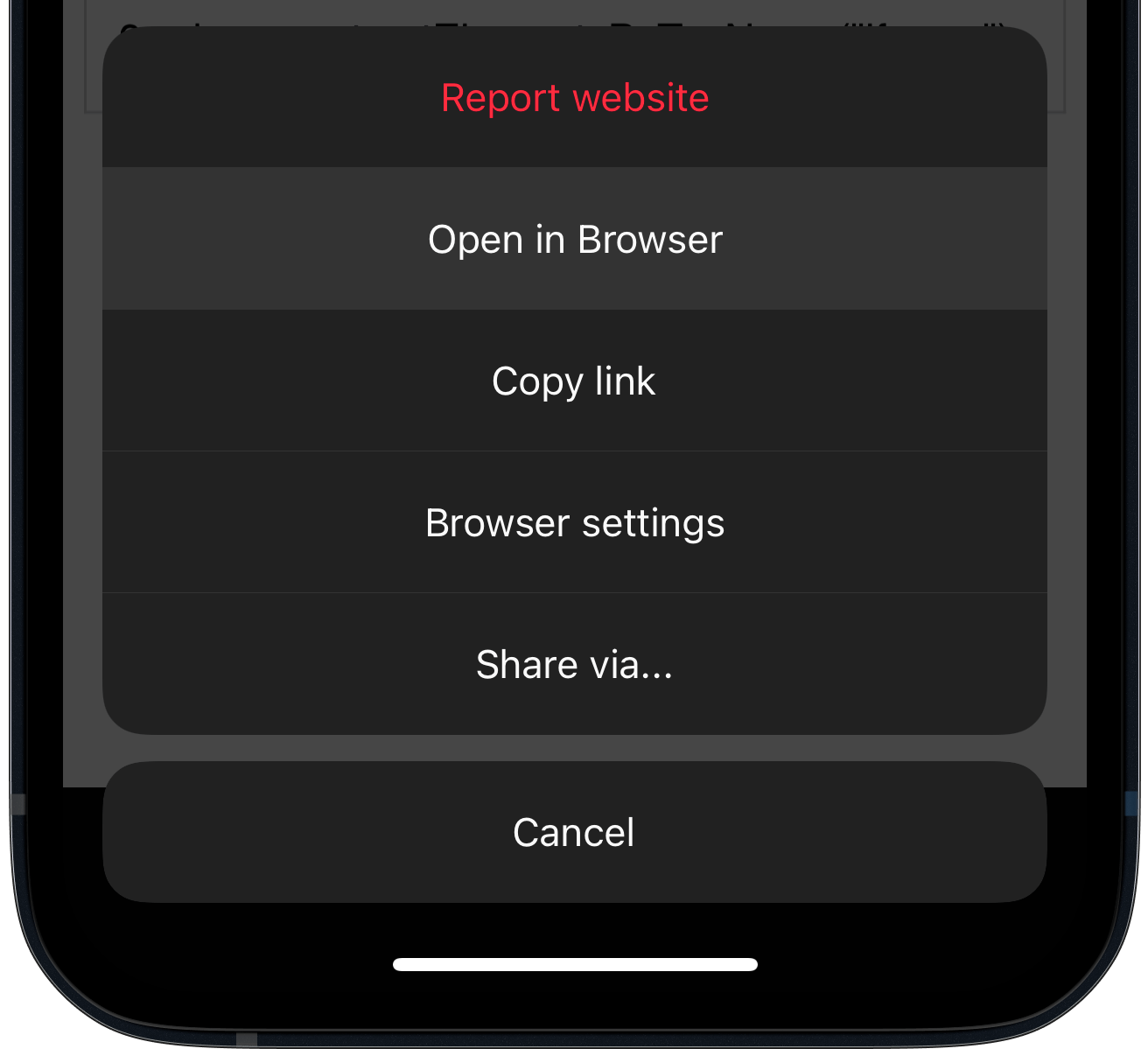

As a user of an app

Most in-app browsers have a way to open the currently shown website in Safari. As soon as you land inside an in-app browser, use the Open in Browser feature to switch to a safer browser. If that button isn’t available, you will have to copy & paste the URL to open the link in the browser of your choice. If the app makes it difficult to even do that, you can tap & hold a link on the website and then use the Copy feature, which can be a little tricky to get right.

TikTok doesn’t have a button to open websites in the default browser.

Update: According to some tweets, sometimes there is a way to open websites in the default browser.

Companies using in-app browsers

If you’re at a company where you have an in-app browser, use it only for your own pages and open all external links in the user’s default browser. Additionally, provide a setting to let users choose a default browser over an in-app browser experience. Unfortunately, these types of changes rarely get prioritized over features that move metrics inside of tech organizations. However, it’s so important for people to educate others on their team, and their managers, about the positive impact of making better security and privacy decisions for the user. These changes can be transparently marketed to users as an opportunity to build further trust.
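As a rough illustration of the “own pages in-app, everything else in the default browser” rule, here is a minimal sketch of the routing decision. The function name `chooseBrowser` and the allow-list are hypothetical (`example.com` is a placeholder), and a real app would make this decision in native code before creating a webview.

```javascript
// Hypothetical allow-list of first-party hosts; replace with your own.
const FIRST_PARTY_HOSTS = ['example.com'];

// Returns 'in-app' for first-party HTTPS pages and 'default-browser' otherwise.
function chooseBrowser(link, firstPartyHosts = FIRST_PARTY_HOSTS) {
  let url;
  try {
    url = new URL(link);
  } catch {
    return 'default-browser'; // unparsable links never get the in-app webview
  }
  if (url.protocol !== 'https:') {
    return 'default-browser';
  }
  const host = url.hostname.toLowerCase();
  // Match the host itself or a true subdomain, never a mere suffix.
  const isFirstParty = firstPartyHosts.some(
    (allowed) => host === allowed || host.endsWith('.' + allowed)
  );
  return isFirstParty ? 'in-app' : 'default-browser';
}

console.log(chooseBrowser('https://example.com/terms'));
console.log(chooseBrowser('https://example.com.evil.net/'));
```

Matching on the parsed hostname, rather than on a substring of the URL, avoids treating look-alike hosts such as `example.com.evil.net` as first-party.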

Major tech companies

It’s important to call out how much movement there’s been in the privacy of data space, but it’s unclear how many of these changes have been motion vs. true progress for the industry and the user.

“Many tech companies take heat for ‘abusing their users’ privacy’, when in fact they try to balance out business priorities, great user experiences, and ensuring they are respecting privacy and user data. It’s clear why companies were motivated to provide an in-app experience for external websites in the first place.

With the latest technology, companies can start to provide a smooth experience for the user, while respecting their privacy. It’s possible for iOS or Android developers to move the privacy standards and responsibility to Apple & Google (e.g. stricter app reviews, more permission screens, etc.), however this is a much larger conversation where companies need to work together to define what standards should exist. We can’t have one or two companies set the direction for the entire industry, since a solution needs to work for the large majority of companies. Otherwise, we’re left in a world where companies are forced to get creative on finding ways to track additional user data from any source possible, or define their own standards of what’s best for user privacy, ultimately hurting the consumer and the product experience.”

Technology-wise, App-Bound Domains seems to be an excellent new WebKit feature making it possible for developers to offer a safer in-app browsing experience when using WKWebView. As an app developer, you can define which domains your app can access (your own), and you won’t be able to control third party pages any more. To disable the protection, a user would have to explicitly disable it in the iOS settings app. However, at the time of writing, this system is not yet enabled by default.

FAQs for non-tech readers

- Can in-app browsers read everything I do online? Yes, if you are browsing through their in-app browser they technically can.

- Do the apps above actually steal my passwords, address and credit card numbers? No! I wanted to showcase that bad actors could get access to this data with this approach. As shown in the past, if it’s possible for a company to get access to data legally and for free, without asking the user for permission, they will track it.

- How can I protect myself? Whenever you open a link from any app, see if the app offers a way to open the currently shown website in your default browser. During this analysis, every app besides TikTok offered a way to do this.

- Are companies doing this on purpose? Building your own in-app browser takes a non-trivial amount of time to program and maintain, significantly more than just using the privacy and user-friendly alternative that’s already been built into the iPhone for the past 7 years. Most likely there is some motivation there for the company to track your activities on those websites.

- I opened InAppBrowser.com inside an app, and it doesn’t show any commands. Am I safe? No! First of all, the website only checks for one of many hundreds of attack vectors: JavaScript injection from the app itself. And even for those, as of December 2020, app developers can completely hide the JavaScript commands they execute, therefore there is no way for us to verify what is actually happening under the hood.

Tags: ios, privacy, hijacking, sniffing, apps, browser, tiktok, keylogger

iOS Privacy: Instagram and Facebook can track anything you do on any website in their in-app browser

Update: A week later, I’ve published a new post, looking into other apps including TikTok, where I also found an additional JavaScript event listener of Instagram which can monitor all taps on third party websites.

The iOS Instagram and Facebook apps render all third party links and ads within their app using a custom in-app browser. This causes various risks for the user, with the host app being able to track every single interaction with external websites, from all form inputs like passwords and addresses, to every single tap.

What does Instagram do?

- Links to external websites are rendered inside the Instagram app, instead of using the built-in Safari.

- This allows Instagram to monitor everything happening on external websites, without consent from either the user or the website provider.

- The Instagram app injects their JavaScript code into every website shown, including when clicking on ads. Even though the injected script doesn’t currently do this, running custom scripts on third party websites allows them to monitor all user interactions, like every button & link tapped, text selections, screenshots, as well as any form inputs, like passwords, addresses and credit card numbers.

Why is this a big deal?

- Apple actively works against cross-host tracking:

- As of iOS 14.5 App Tracking Transparency puts the user in control: Apps need to get the user’s permission before tracking their data across apps owned by other companies.

- Safari already blocks third party cookies by default

- Google Chrome is soon phasing out third party cookies

- Firefox just announced Total Cookie Protection by default to prevent any cross-page tracking

- Some ISPs used to inject their own tracking/ad code into all websites, however they could only do it for unencrypted pages. With the rise of HTTPS by default, this isn’t an option any more. The approach the Instagram & Facebook apps use here works for any website, no matter if it’s encrypted or not.

After App Tracking Transparency was introduced, Meta announced:

Apple’s simple iPhone alert is costing Facebook $10 billion a year

Facebook complained that Apple’s App Tracking Transparency favors companies like Google because App Tracking Transparency “carves out browsers from the tracking prompts Apple requires for apps.”

Websites you visit on iOS don’t trigger tracking prompts because the anti-tracking features are built in.

With 1 billion active Instagram users, the amount of data Instagram can collect by injecting the tracking code into every third party website opened from the Instagram & Facebook apps is staggering.

With web browsers and iOS putting more and more privacy controls into the user’s hands, it becomes clear why Instagram is interested in monitoring all web traffic of external websites.

Facebook bombarded its users with messages begging them to turn tracking back on. It threatened an antitrust suit against Apple. It got small businesses to defend user-tracking, claiming that when a giant corporation spies on billions of people, that’s a form of small business development.

– EFF - Facebook Says Apple is Too Powerful. They’re Right.

Note added on 2022-08-11: Meta is following the ATT (App Tracking Transparency) rules (as added as a note at the bottom of the article). I explained the above to provide some context on why getting data from third party websites/apps is a big deal. The message of this article is about how the iOS Instagram app actively injects and executes JavaScript code on third party websites, using their in-app browser. This article does not talk about the legal aspect of things, but the technical implementation of what is happening, and what is possible on a technical level.

FAQs for non-tech readers

- Can Instagram/Facebook read everything I do online? No! Instagram is only able to read and watch your online activities when you open a link or ad from within their apps.

- Does Facebook actually steal my passwords, address and credit card numbers? No! I didn’t prove the exact data Instagram is tracking, but wanted to showcase the kind of data they could get without you knowing. As shown in the past, if it’s possible for a company to get access to data legally and for free, without asking the user for permission, they will track it.

- How can I protect myself? For full details scroll down to the end of the article. Summary: Whenever you open a link from Instagram (or Facebook or Messenger), make sure to click the dots in the corner to open the page in Safari instead.

- Is Instagram doing this on purpose? I can’t say how the decisions were made internally. All I can say is that building your own in-app browser takes a non-trivial amount of time to program and maintain, significantly more than just using the privacy and user-friendly alternative that’s already been built into the iPhone for the past 7 years.

What gets injected?

The external JavaScript file the Instagram app injects is pcm.js (connect.facebook.net/en_US/pcm.js), which is code to build a bridge to communicate with the host app. According to Meta’s info provided to me in response to this publication, it helps aggregate events, e.g. online purchases, before those events are used for targeted advertising and measurement for the Facebook platform.

Disclaimer

I don’t have a list of precise data Instagram sends back home. I do have proof that the Instagram and Facebook apps actively run JavaScript commands to inject an additional JavaScript SDK without the user’s consent, and track the user’s text selections. If Instagram is doing this already, they could also inject any other JavaScript code. The Instagram app itself is well protected against human-in-the-middle attacks; inspecting its traffic is only possible by modifying the Android binary to remove certificate pinning and running it in a simulator.

Overall the goal of this project wasn’t to get a list of data that is sent back, but to highlight the privacy & security issues that are caused by the use of in-app browsers, as well as to prove that apps like Instagram are already exploiting this loophole.

To summarize the risks and disadvantages of having in-app browsers:

- Privacy & Analytics: The host app can track literally everything happening on the website, every tap, input, scrolling behavior, which content gets copy & pasted, as well as data shown like online purchases

- Stealing of user credentials, physical addresses, API keys, etc.

- Ads & Referrals: The host app can inject advertisements into the website, or replace the ads API key to steal revenue from the host app, or replace all URLs to include your referral code (this happened before)

- Security: Browsers spent years optimizing the security UX of the web, like showing the HTTPS encryption status, warning the user about sketchy or unencrypted websites, and more

- Injecting additional JavaScript code onto a third party website can cause issues and glitches, potentially breaking the website

- The user’s browser extensions & content blockers aren’t available

- Deep linking doesn’t work well in most cases

- Often no easy way to share a link via other platforms (e.g. via Email, AirDrop, etc.)

Instagram’s in-app browser supports auto-fill of your address and payment information. However there is no legit reason for this to exist in the first place, with all of this already built into the operating system, or the web browser itself.

Testing various Meta apps

| Instagram iOS | Messenger iOS |
|---|---|
| ![An iPhone screenshot showing a website that lists the JavaScript commands executed by the Instagram app in its in-app browser, including a 'selectionchange' event listener, document.getElementById('iab-pcm-sdk'), document.createElement('script') with the source https://connect.facebook.net/en_US/pcm.js, document.getElementsByTagName('script'), parentNode.insertBefore, and document.getElementsByTagName('iframe')](https://krausefx.com/assets/posts/injecting-code/instagram_framed.png) | |

| Facebook iOS | Instagram Android |
|---|---|
| | |

WhatsApp is opening iOS Safari by default, therefore no issues.

How it works

To my knowledge, there is no good way to monitor all JavaScript commands that get executed by the host iOS app (would love to hear if there is a better way).

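I created a new, plain HTML file, with some JS code to override some of the document methods:

```javascript
// Keep a reference to the native implementation before replacing it
var originalGetElementById = document.getElementById;

document.getElementById = function(a, b) {
  // appendCommand is the page's own helper (not shown here) that records the detected command
  appendCommand('document.getElementById("' + a + '")')
  return originalGetElementById.apply(this, arguments);
}
```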
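The same override pattern can be applied to several methods at once. The following is a minimal sketch, assuming a hypothetical helper called `instrument` and a stand-in `fakeDocument` object so that it also runs outside a browser. It is not the code used on the actual test page.

```javascript
// Hypothetical helper: replaces the named methods on `target` with wrappers
// that record each call before delegating to the original implementation.
function instrument(target, label, methodNames, log) {
  for (const name of methodNames) {
    const original = target[name];
    if (typeof original !== 'function') continue; // skip missing methods
    target[name] = function (...args) {
      const shownArgs = args.map((a) => JSON.stringify(a)).join(', ');
      log.push(`${label}.${name}(${shownArgs})`);
      return original.apply(this, args);
    };
  }
}

// Stand-in for the real `document`, so the sketch runs outside a browser.
const fakeDocument = {
  getElementById(id) { return null; },
  createElement(tag) { return { tagName: tag }; },
};

const commands = [];
instrument(fakeDocument, 'document', ['getElementById', 'createElement'], commands);

// Simulates calls made by a script injected from a host app.
fakeDocument.getElementById('iab-pcm-sdk');
fakeDocument.createElement('script');

console.log(commands);
```

In a browser, the first argument would be the real `document`. As with the single-method version, this only catches calls that go through the overridden methods in the page’s own JavaScript environment.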

Opening that HTML file from the iOS Instagram app yielded the following:

Comparing this to what happens when using a normal browser, or in this case, Telegram, which uses the recommended SFSafariViewController:

As you can see, a regular browser, or SFSafariViewController doesn’t run any JS code. SFSafariViewController is a great way for app developers to show third party web content to the user, without them leaving your app, while still preserving the privacy and comfort for the user.

Technical Details

- Instagram adds a new event listener, to get details about every time the user selects any text on the website. This, in combination with listening to screenshots, gives Instagram full insight over what specific piece of information was selected & shared

- The Instagram app checks if there is an element with the ID iab-pcm-sdk: According to this tweet, the "iab" likely refers to “In App Browser”.

- If no element with the ID iab-pcm-sdk was found, Instagram creates a new script element, sets its source to https://connect.facebook.net/en_US/pcm.js

- It then finds the first script element on your website to insert the pcm JavaScript file right before

- Instagram also queries for iframes on your website, however I couldn’t find any indication of what they’re doing with it
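The sequence of calls listed above can be sketched as a single function. This is a reconstruction from the logged calls only, not Meta's actual source code. The function name injectPcmScript is hypothetical, and two details are assumptions: that the injected element carries the ID iab-pcm-sdk, and that nothing happens when the page has no script element.

```javascript
// Reconstruction of the observed call sequence; NOT Meta's actual source code.
const PCM_ELEMENT_ID = "iab-pcm-sdk";
const PCM_SCRIPT_URL = "https://connect.facebook.net/en_US/pcm.js";

// `doc` is a Document-like object; returns true if a script tag was inserted.
function injectPcmScript(doc) {
  // Step 1: skip the injection if an element with the marker ID already exists
  if (doc.getElementById(PCM_ELEMENT_ID)) {
    return false;
  }
  // Step 2: create a script element pointing at pcm.js
  const script = doc.createElement("script");
  script.id = PCM_ELEMENT_ID; // assumption: the injected tag uses the checked ID
  script.src = PCM_SCRIPT_URL;
  // Step 3: insert it right before the first script element of the page
  const firstScript = doc.getElementsByTagName("script")[0];
  if (!firstScript) {
    return false; // assumption: no-op when the page has no script element
  }
  firstScript.parentNode.insertBefore(script, firstScript);
  return true;
}
```

Under these assumptions, step 1 is the check a website can exploit: if an element with that ID is already present, the rest of the sequence never runs.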

Update: A week later, I published a new post looking into other apps including TikTok, where I also found additional JavaScript event listeners in Instagram, in particular:

- Instagram iOS subscribes to every tap on any button, link, image or other component on external websites rendered inside the Instagram app.

- Instagram iOS subscribes to every time the user selects a UI element (like a text field) on third party websites.

How to protect yourself as a user?

Escape the in-app-webview

Most in-app browsers have a way to open the currently rendered website in Safari. As soon as you land on that screen, just use that option to escape it. If that button isn’t available, you will have to copy & paste the URL to open the link in the browser of your choice.

Use the web version

Most social networks, including Instagram and Facebook, offer a decent mobile web version with a similar feature set. You can use https://instagram.com without issues in iOS Safari.

How to protect yourself as a website provider?

Until Instagram resolves this issue (if ever), you can quite easily trick the Instagram and Facebook apps into believing the tracking code is already installed. Just add the following to your HTML code:

<span id="iab-pcm-sdk"></span>

<span id="iab-autofill-sdk"></span>

Additionally, to prevent Instagram from tracking the user’s text selections on your website:

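    // Keep a reference to the native implementation before overriding it
    const originalEventListener = document.addEventListener;

    document.addEventListener = function(a, b) {
        // Drop the listener that forwards text selections to the app's native bridge
        if (typeof b === "function" && b.toString().indexOf("messageHandlers.fb_getSelection") > -1) {
            return null;
        }
        return originalEventListener.apply(this, arguments);
    };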

This will not solve the actual problem of Instagram running JavaScript code against your website, but at least no additional JS scripts will be injected, and less data will be tracked.

It’s also easy for a website to detect if the current browser is the Instagram/Facebook app by checking the user agent, however I couldn’t find a good way to pop out of the in-app browser automatically to open Safari instead. If you know a solution, I’d love to know.
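A minimal sketch of such a user agent check follows. The substrings used here (Instagram, FBAN, FBAV) are an assumption based on user agent strings commonly reported for these apps, not something verified in this post, and the function name isMetaInAppBrowser is hypothetical.

```javascript
// Assumption: these tokens appear in the user agents of the Instagram and
// Facebook in-app browsers; verify against real traffic before relying on them.
const META_IN_APP_TOKENS = ["Instagram", "FBAN", "FBAV"];

// Returns true if the given user agent string contains one of the tokens.
function isMetaInAppBrowser(userAgent) {
  return META_IN_APP_TOKENS.some((token) => userAgent.includes(token));
}

// Only runs where a navigator object exists (e.g. in a browser)
if (typeof navigator !== "undefined" && isMetaInAppBrowser(navigator.userAgent)) {
  console.log("Rendered inside a Meta in-app browser; consider suggesting Safari.");
}
```

A website could use the result to show a hint asking the user to open the page in Safari, since the check alone does not leave the in-app browser.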

Update on 2022-08-11: In response to this article, Adrian published a post about this exact topic.

Proposals

For Apple

Apple is doing a fantastic job building their platform with the user’s privacy in mind. One of the 4 privacy principles:

User Transparency and Control: Making sure that users know what data is shared and how it is used, and that they can exercise control over it.

– Apple Privacy PDF (April 2021)

At the time of writing, there is no App Store Review rule that prohibits companies from building their own in-app browser to track the user, read their inputs, and inject additional ads into third party websites. However, Apple clearly recommends using SFSafariViewController:

Avoid using a web view to build a web browser. Using a web view to let people briefly access a website without leaving the context of your app is fine, but Safari is the primary way people browse the web. Attempting to replicate the functionality of Safari in your app is unnecessary and discouraged.

– Apple Human Interface Guidelines (June 2022)

If your app lets users view websites from anywhere on the Internet, use the SFSafariViewController class. If your app customizes, interacts with, or controls the display of web content, use the WKWebView class.

– Apple SFSafariViewController docs (June 2022)

Introducing App-Bound Domains

App-Bound Domains is an excellent new WebKit feature making it possible for developers to offer a safer in-app browsing experience when using WKWebView. As an app developer, you can define which domains your app can access, and all web requests will be restricted to them. To disable the protection, a user would have to explicitly disable it in the iOS settings app.

App-Bound Domains went live with iOS 14 (~1.5 years ago), however it’s only an opt-in option for developers, meaning the vast majority of iOS apps don’t make use of this feature.

If the developers of SocialApp want a better user privacy experience they have two paths forward:

- Use SafariViewController instead of WKWebView for in-app browsing. SafariViewController protects user data from SocialApp by loading pages outside of SocialApp’s process space. SocialApp can guarantee it is giving its users the best available user privacy experience while using SafariViewController.

- Opt-in to App-Bound Domains. The additional WKWebView restrictions from App-Bound Domains ensure that SocialApp is not able to track users using the APIs outlined above.

I highlighted the "want a better user privacy experience" part, as this is the missing piece: App-Bound Domains should be a requirement for all iOS apps, since the social media apps are the ones injecting the tracking code.

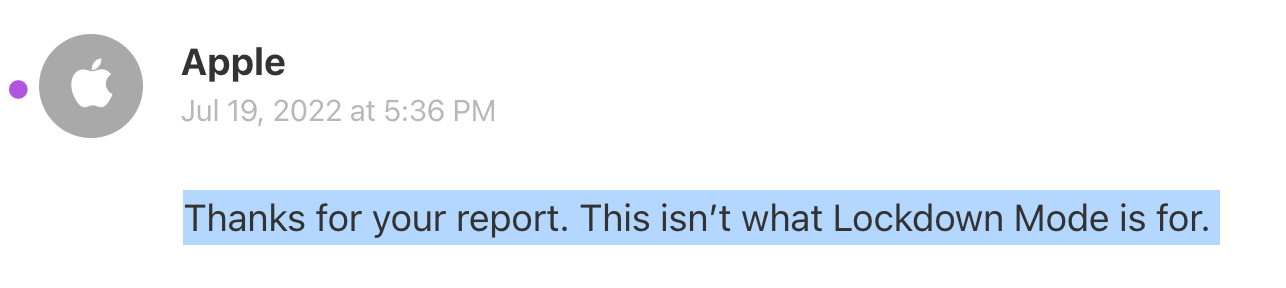

In July 2022, Apple introduced Lockdown Mode to better protect people who are at high risk. Unfortunately, iOS Lockdown Mode doesn’t change the way in-app web views work. I have filed a radar with Apple: rdar://10735684, to which Apple responded with “This isn’t what Lockdown Mode is for”.

A few immediate steps for Apple to take:

Update the App Review Rules to require the use of SFSafariViewController or App-Bound Domains when displaying any third party websites.

- There should be only a few exceptions (e.g. browser apps), which require two extra steps:

- Request an extra entitlement to ensure it’s a valid use-case

- Have the user confirm the extra permission

- First-party websites/content can still be displayed using the WKWebView class, as they are often used for UI elements, or the app actually modifying their first party content (e.g. auto-dismissing of their own cookie banners)

I’ve also submitted a radar (rdar://38109139) to Apple as part of my past blog post.

For Meta

Do what Meta is already doing with WhatsApp: Stop modifying third party websites, and use Safari or SFSafariViewController for all third party websites. It’s what’s best for the user, and the right thing to do.

I disclosed this issue to Meta through their Bug Bounty Program, where within a few hours they confirmed they were able to reproduce the “issue”. However, I haven’t heard back anything else within the last 9 weeks, besides being asked to wait longer until they have a full report. Since there haven’t been any responses to my follow-up questions, nor did they stop injecting tracking code into external websites, I’ve decided to go public with this information (after giving them another 2 weeks heads-up).

Update 2022-08-11 (information provided by Meta)

After the publication went live, Meta sent two emails clarifying what is happening on their end. I addressed their comments; the following has changed:

- The script that gets injected isn’t the Meta Pixel, but it’s the pcm.js script, which, according to Meta, helps aggregate events, i.e. online purchase, before those events are used for targeted advertising and measurement for the Facebook platform

- According to Meta, the script injected (pcm.js) helps Meta respect the user’s ATT opt out choice, which is only relevant if the rendered website has the Meta Pixel installed. However as far as my understanding goes, all of this wouldn’t be necessary if Instagram were to open the phone’s default browser, instead of building & using the custom in-app browser.

I sent Meta a few follow-up questions - once I hear back, I’ll update the post accordingly, and announce the changes on Twitter.

In the meantime, everything published in this post is correct: the Instagram app is executing and injecting JavaScript code into third party websites, rendered inside their in-app browser, which poses a big risk for the user. Also, there is no way to opt out of the custom in-app browser.

As Meta was providing me with more context and details, I have updated the post to reflect this. You can find the full history of the post, and which parts got edited over here.

Update 2022-08-14 (information provided by Meta)

The main question I asked: If Meta built a whole system to inject JavaScript code (pcm.js) into third party websites to respect people’s App Tracking Transparency (ATT) choices, why wouldn’t Instagram just open all external links in the user’s default browser? This would put the user in full control over their privacy settings, and wouldn’t require any engineering effort on Meta’s end.

To that, the answer was:

As shared earlier, pcm.js is required to respect a user’s ATT decision. The script needs to be injected to authenticate the source and the integrity (i.e. if pixel traffic is valid) of the data being received. Authentication would include checking that, when data is received from the In App Browser through the WebView-iOS native bridge, it contains a valid nonce coming from the injected script. SFSafariViewController doesn’t support this. There are additional components within the In App Browser that provide security and user features that SFSafariViewController also doesn’t support.

While that answer provides some context, I don’t think it answers my question. Other apps, including Meta’s own WhatsApp, can operate perfectly fine without using a custom in-app browser.

My ticket with Meta got marked as resolved "given the items raised in your submission are intentional and not a privacy concern".

My second question was about the tracking of the user’s text selection, and according to Meta, this is some old code that isn’t used anymore:

In older versions of iOS, this code was necessary to allow users to share selected text to their news feed. As newer versions of iOS have built-in functionality for text selection, this feature has been deprecated for some time and was already identified for removal as part of our standard code maintenance. There is no code in our In App Browser that shares text selection information from websites without the user taking action to share it themselves via a feature (like quote share).

Check out my other privacy and security related publications.

Tags: ios, privacy, hijacking, instagram, meta, facebook, sniffing