Creating perfect App Store Screenshots of your iOS App

Your App Store screenshots are probably the most important thing when it comes to convincing potential users to download or purchase your app. Unfortunately, many apps don’t do screenshots right.

A quick overview of the existing methods for generating screenshots:

Manually create screenshots on all devices and all languages

It goes without saying that this takes too much time, which also lowers the quality of the screenshots. Since the process is not automated, the screenshots will show slightly different content across devices and languages. Many companies end up creating screenshots in only one language and using them for all languages. While this might seem okay to us developers, there are many potential users out there who cannot read the text in your screenshots if it is not localised. Have you ever looked at a screenshot with content in a language you don’t know? It won’t convince you to download the app.

Biggest disadvantage of this method: If you notice a spelling mistake in the screenshots, release an update with a new design, or just want to show more up-to-date content, you’ll have to create new screenshots for all languages and devices… manually.

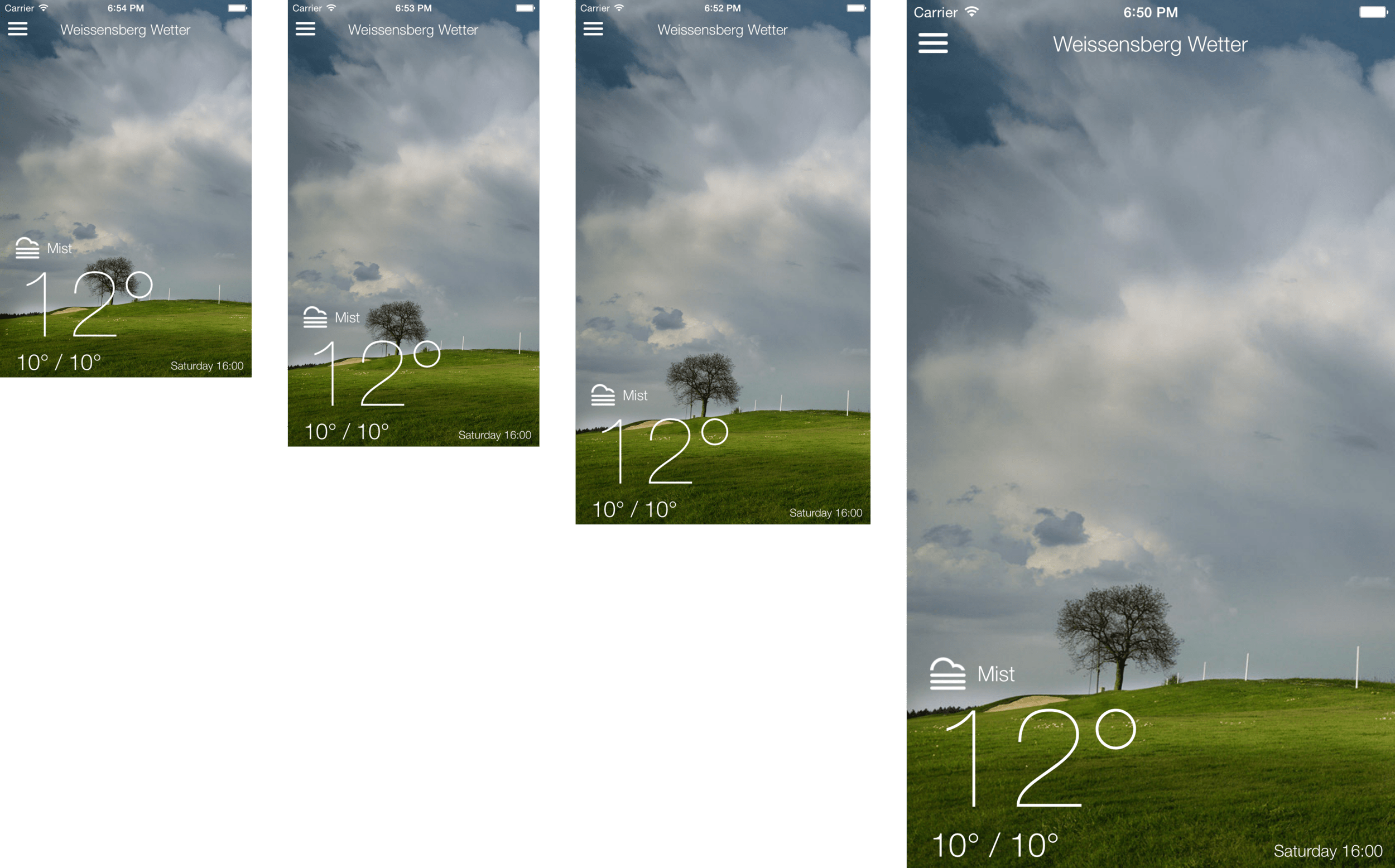

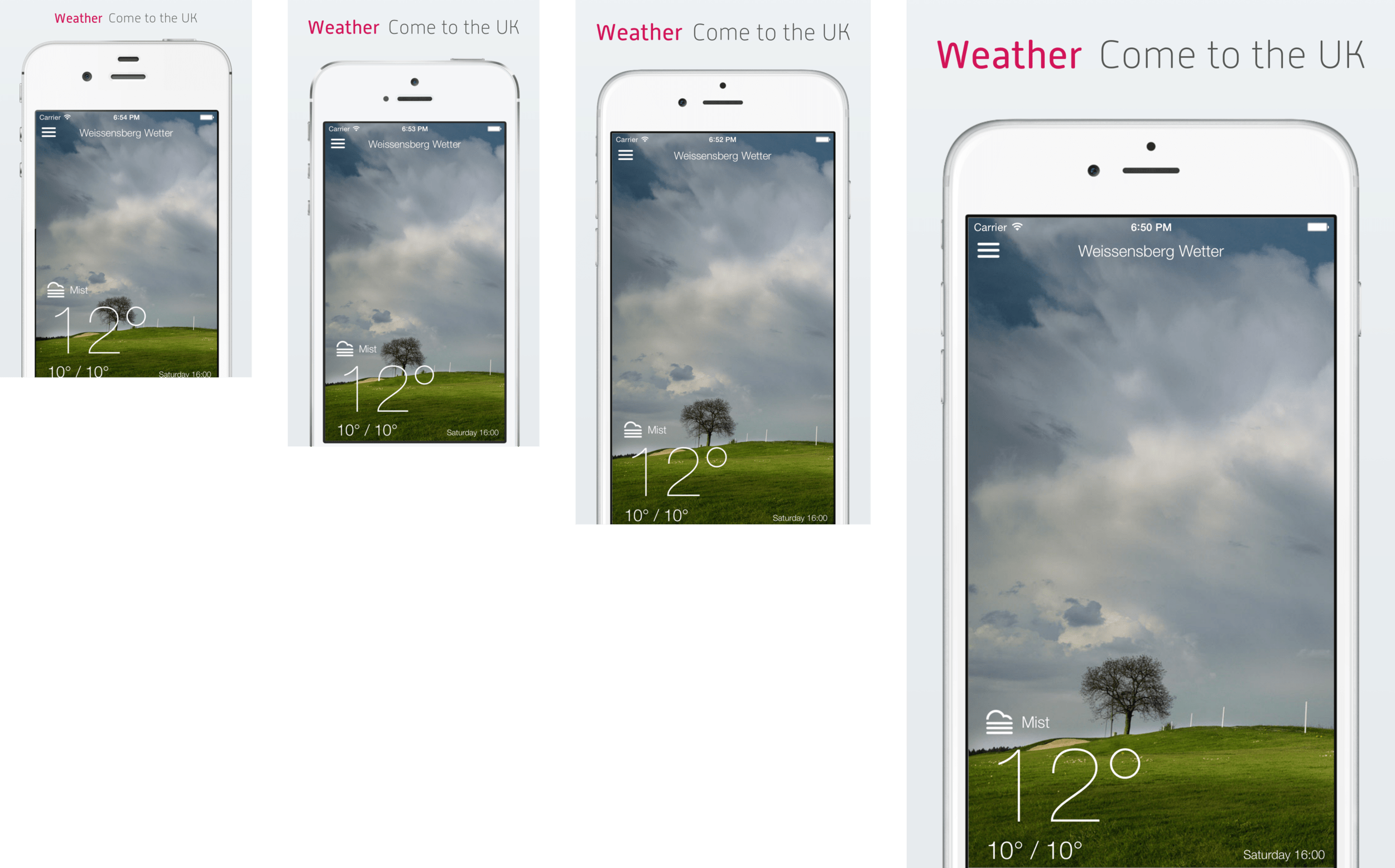

Notice how the font size in the first 3 screenshots (iPhone 4, iPhone 5, iPhone 6) is exactly the same. Only in the last screenshot (iPhone 6 Plus) does the text seem larger, as it’s a @3x display.

Create screenshots on one device type, put them into frames and resize them

This way, you only create 5 screenshots per language on one device type and put them into frames. Because the same screenshot goes into different frames, the tool you use can resize the resulting image to match the iTunes Connect requirements.

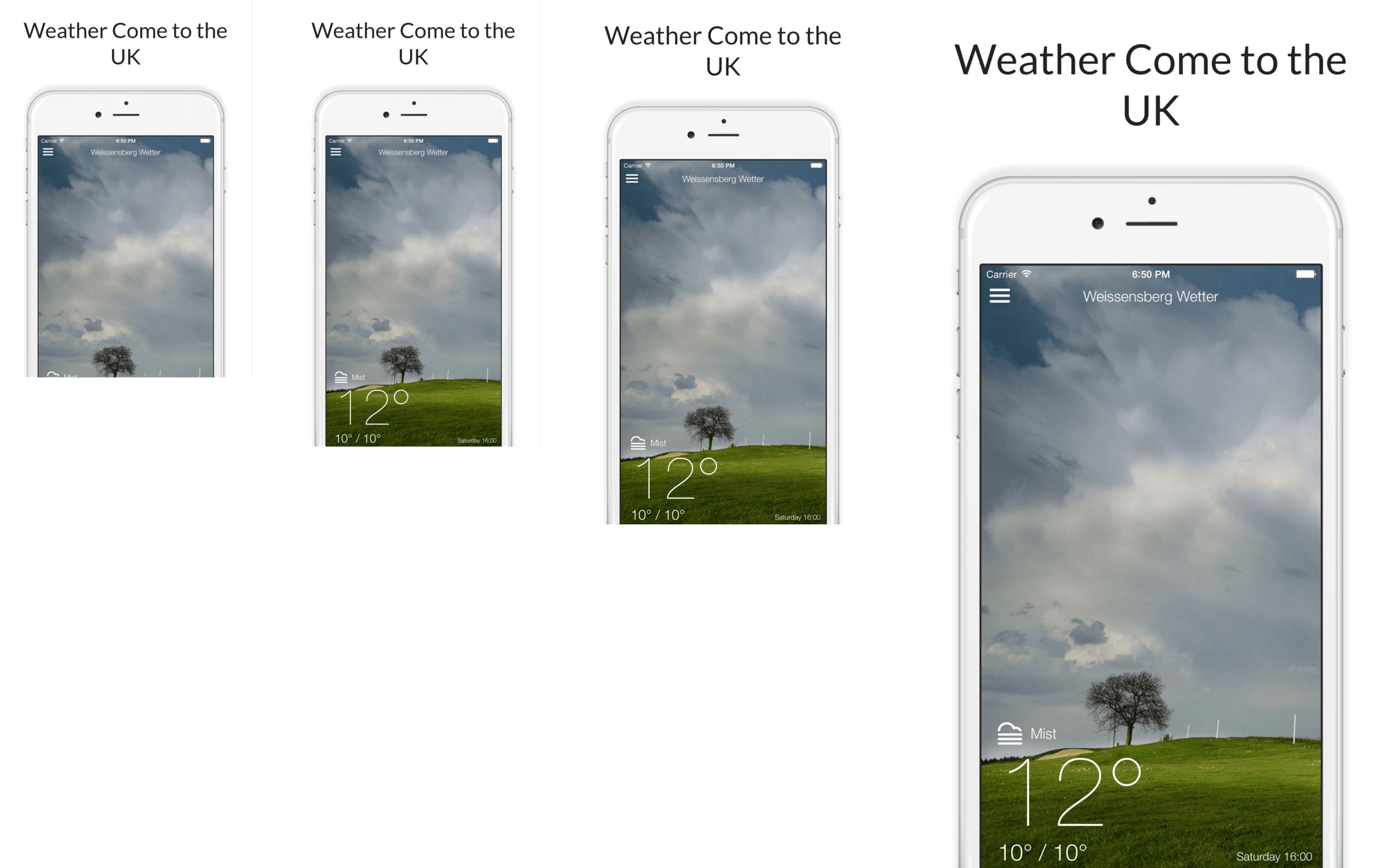

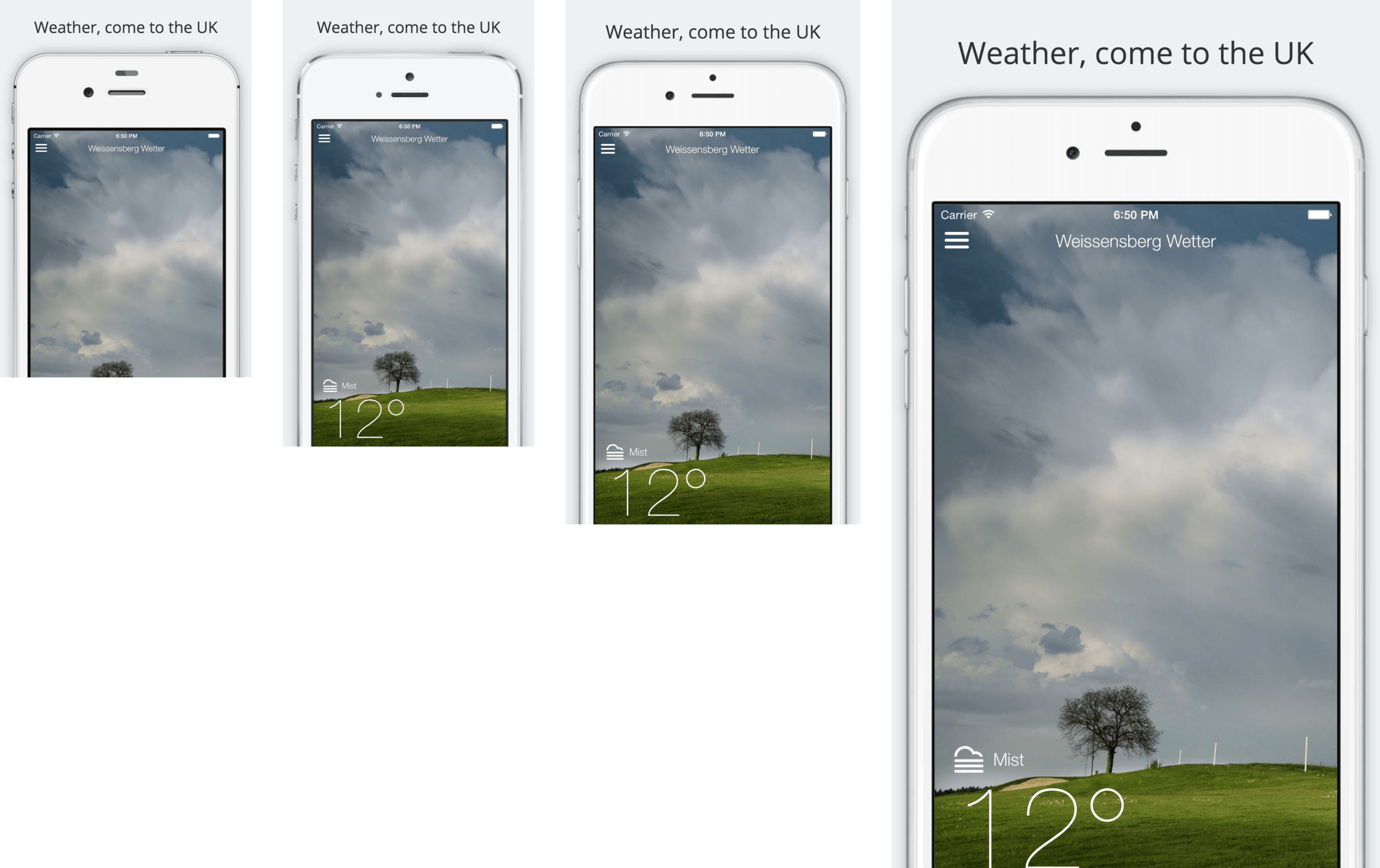

Below are some example applications that use this technique. I only had to upload one screenshot and got the result shown below (left: iPhone 4, right: iPhone 6 Plus).

Do you see the difference between the font sizes in the screenshots? The “Carrier” label is easily readable on the iPhone 6 Plus and maybe the iPhone 6, but not on the other devices. Another problem with this service is the wrong device types: The iPhone 6 should not look the same as the other devices.

A different example which now uses the correct device frame for each screen size. Do you see how very small the font on the iPhone 4 is? All 4 frames use the exact same screenshot. On smaller devices this results in very small fonts which are difficult to read for the end-user. On larger devices the screenshot is scaled up, which causes blurry images and fonts.
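To put rough numbers on the scaling problem, here is a minimal sketch that computes how much one screenshot has to be stretched or shrunk to fill each screen width. The pixel sizes are the portrait resolutions of the four screen classes mentioned above. The choice of the iPhone 6 as the source device is an assumption for illustration only, since the services shown here don’t say which size they start from.

```ruby
# Portrait pixel sizes of the four iPhone screen classes discussed above.
DEVICES = {
  "iPhone 4"      => [640, 960],
  "iPhone 5"      => [640, 1136],
  "iPhone 6"      => [750, 1334],
  "iPhone 6 Plus" => [1242, 2208]
}.freeze

# Assumption for illustration: the single source screenshot comes from an iPhone 6.
SOURCE = DEVICES.fetch("iPhone 6")

# Factor by which the source has to be scaled to fill the target width.
def scale_factor(source, target)
  (target[0].to_f / source[0]).round(2)
end

# Human-readable consequence of a given scale factor.
def describe(factor)
  return "native size" if factor == 1.0
  factor > 1.0 ? "scaled up, edges get blurry" : "scaled down, text gets smaller"
end

DEVICES.each do |name, size|
  factor = scale_factor(SOURCE, size)
  puts format("%-14s %4dx%-5d x%.2f  %s", name, size[0], size[1], factor, describe(factor))
end
```

The sketch only compares widths. The iPhone 4 also has a different aspect ratio, so a scaled screenshot has to be cropped or letterboxed on top of being resized.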

Don’t get me wrong, using a web service that does this kind of framing for you is a great and easy way to get beautiful screenshots for the App Store. It’s also the best solution if you don’t want to invest more time in automating better screenshots.

Update August 2016: Use just one set of screenshots

With the iTunes Connect screenshot update on August 8th, 2016, you can now use one set of screenshots for all available devices. iTunes Connect will automatically scale the images for you, so that each device renders the exact same image.

While this is convenient, this approach has the same problems as the device frame approach: The screenshots don’t actually show how the app looks on the user’s device. It’s a valid way to start though, since you can gradually overwrite screenshots for specific languages and devices.

To sum up, the problems with existing techniques:

- Wrongly scaled screenshots resulting in blurry font

- Not using the correct device frames for the various screen sizes

- Screenshot doesn’t show the screen the user will actually see (the iPhone 6 Plus user interface should look different from the iPhone 4)

- Most screenshot builders don’t have landscape support

- No Mac App Support

Using correct screenshots for all device types and languages (“The Right Way”)

Checklist for really great screenshots:

- Screenshots localised in all languages your app supports

- Different screenshots for different device types to have the correct font in your screenshots

- Same content in all languages and device types (means same screens visible with the same items)

- No loading indicators should be visible, not even in the status bar

- No scrolling indicators should be visible

- A clean status bar: Full battery, full Wi-Fi and of course 9:41

- Localised titles above your screenshots

- Device in screenshots actually matches the device of the user (except for the color)

- A nice looking background behind the frames

- Optionally a coloured title around the device frame

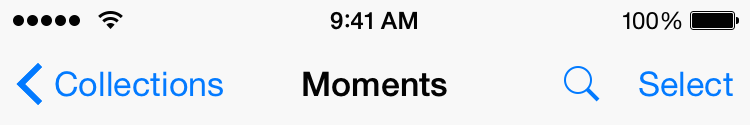

Clean status bar

Notice the following things:

- 9:41 AM (or just 9:41)

- All 5 dots (formerly known as bars)

- Full Wi-Fi signal

- Full battery

To achieve such a nice-looking status bar, I can really recommend SimulatorStatusMagic by Dave Verwer. It’s very easy to set up and you get all the points mentioned above for free.

You can also use the QuickTime recording feature by connecting your real device via USB. This works nicely, but it requires you to own a physical device for each screen size.

Nothing is perfect

I worked on screenshot automation for a really long time, but haven’t found the ultimate solution (yet). Even with tools like snapshot and frameit there are some open issues.

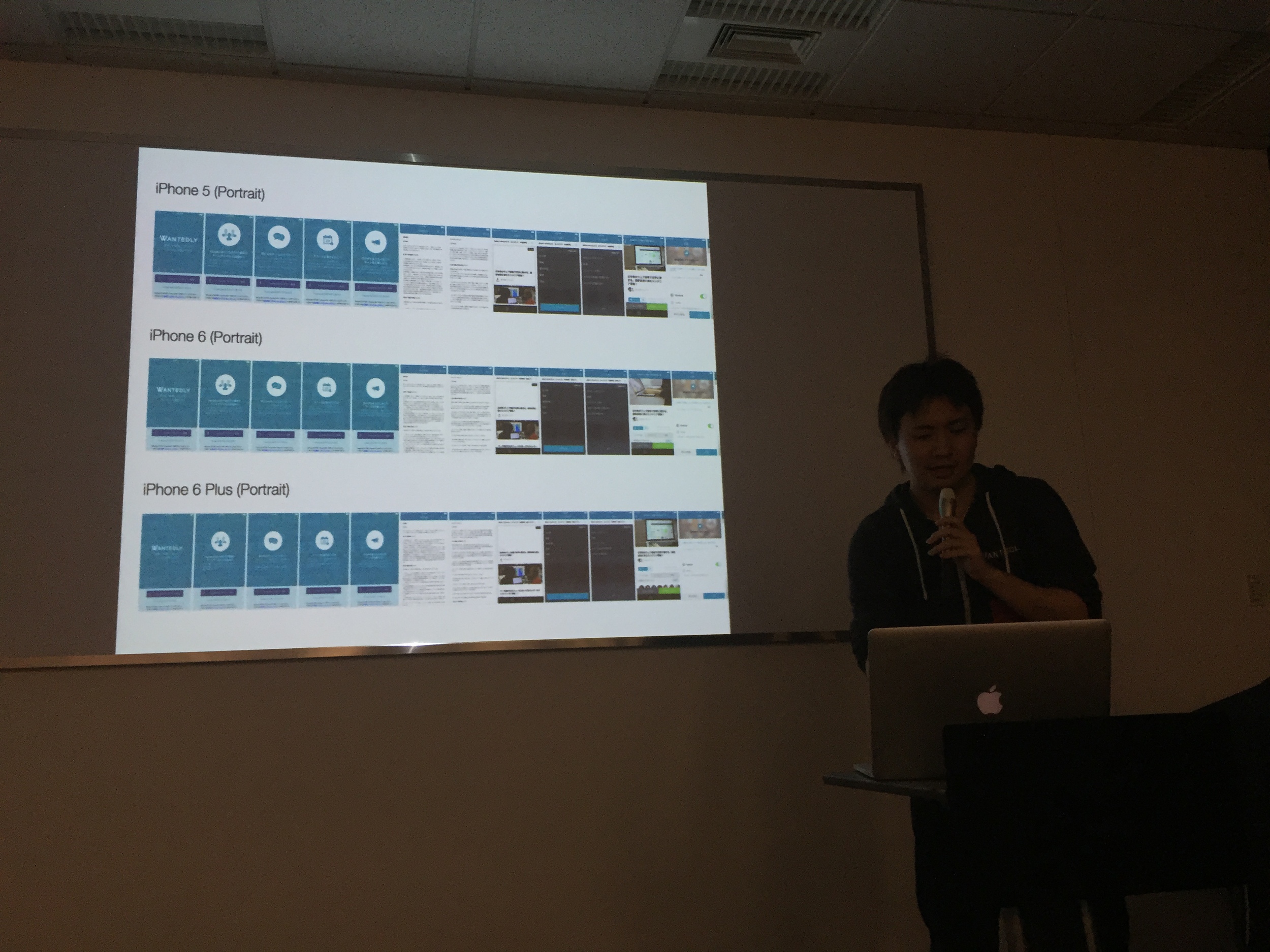

Okay, back to what you can actually do today :) Below are the results of nice screenshots, which were all generated completely automatically.

What’s wrong with those screenshots? The time isn’t 9:41.

On the iPhone 4, iPhone 5 and iPhone 6 the font size is exactly the same. The iPhone 6 Plus, again, has a @3x display which is why the text appears larger.

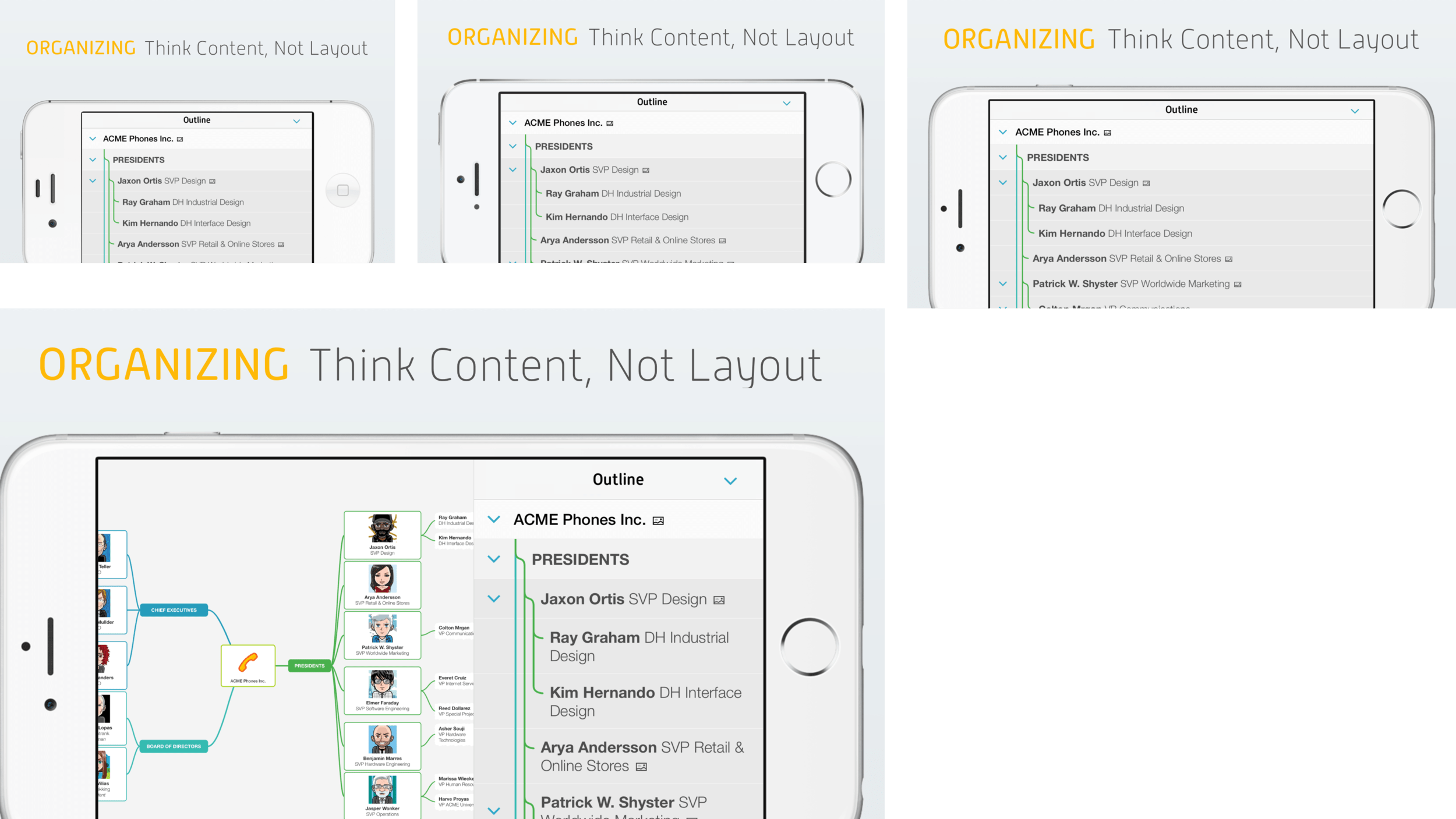

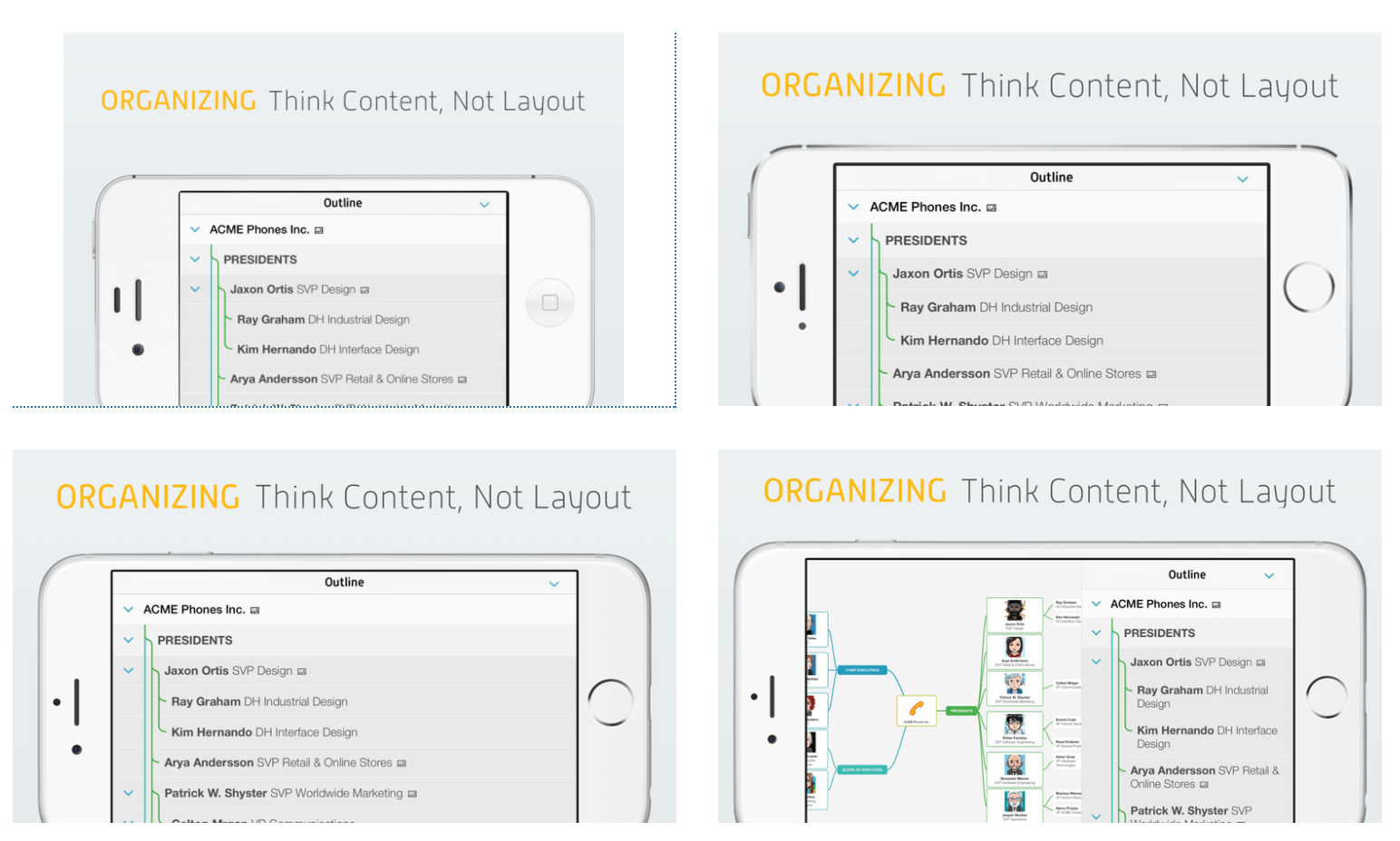

What does this look like for landscape screenshots?

Since the above screenshot collection looks a bit messy I decided to automatically resize the screenshots in the following examples. Instead of leaving all screenshots 1:1 they now appear properly aligned next to each other.

Landscape screenshots of MindNode: The iPhone 6 Plus shows a split screen when the app is in landscape mode. The smaller screen sizes show the list only. Users can see what the app looks like on their device before even installing it.

Another interesting detail: Take a look at the lock button on the different devices. On the 2 screenshots at the top, the lock button is on the top of the iPhone, while on the latest generation it is on the right side.

The screenshots don’t have a status bar, since MindNode doesn’t show it in landscape mode.

Special thanks to Harald Eckmüller for designing the MindNode screenshots.

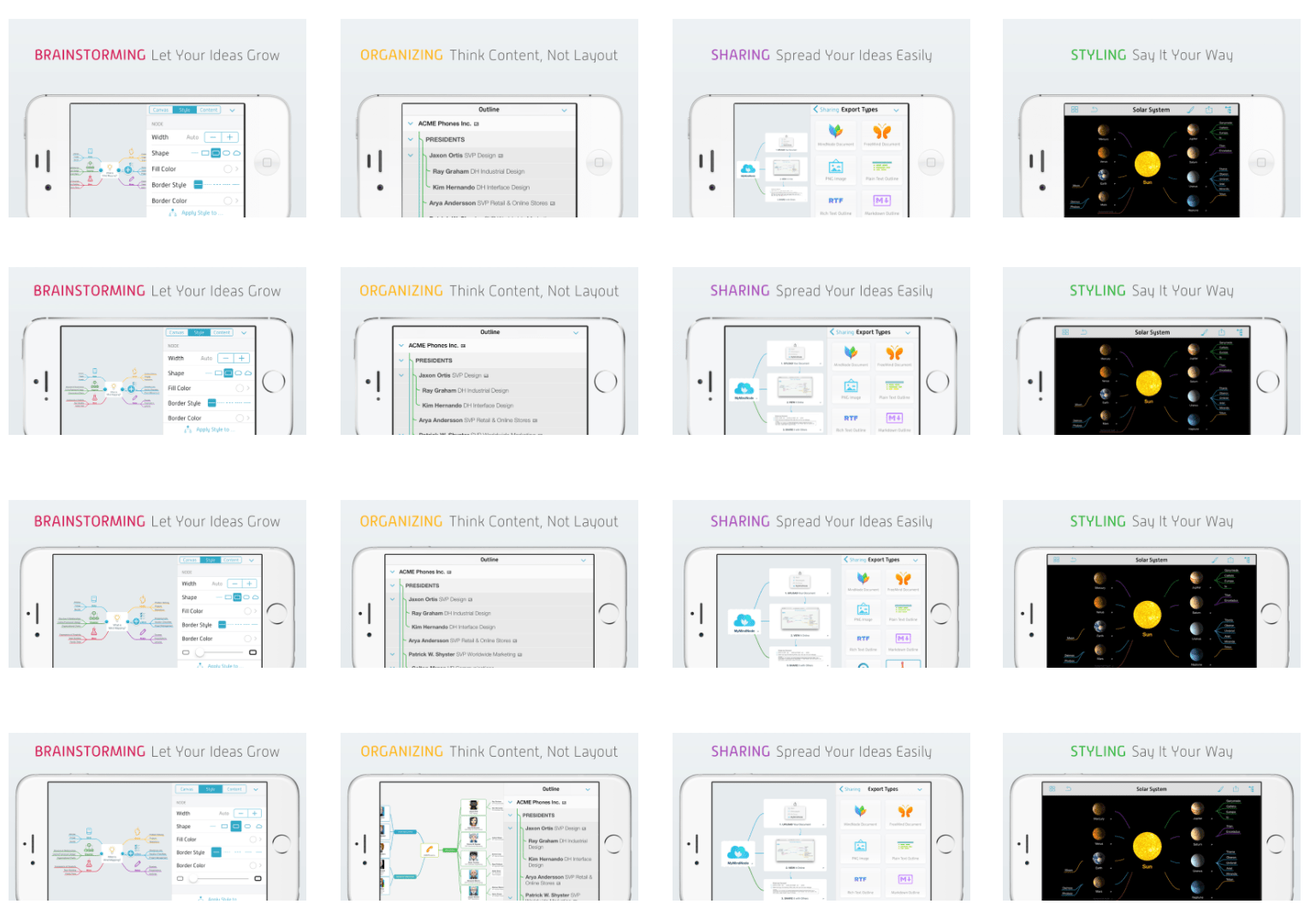

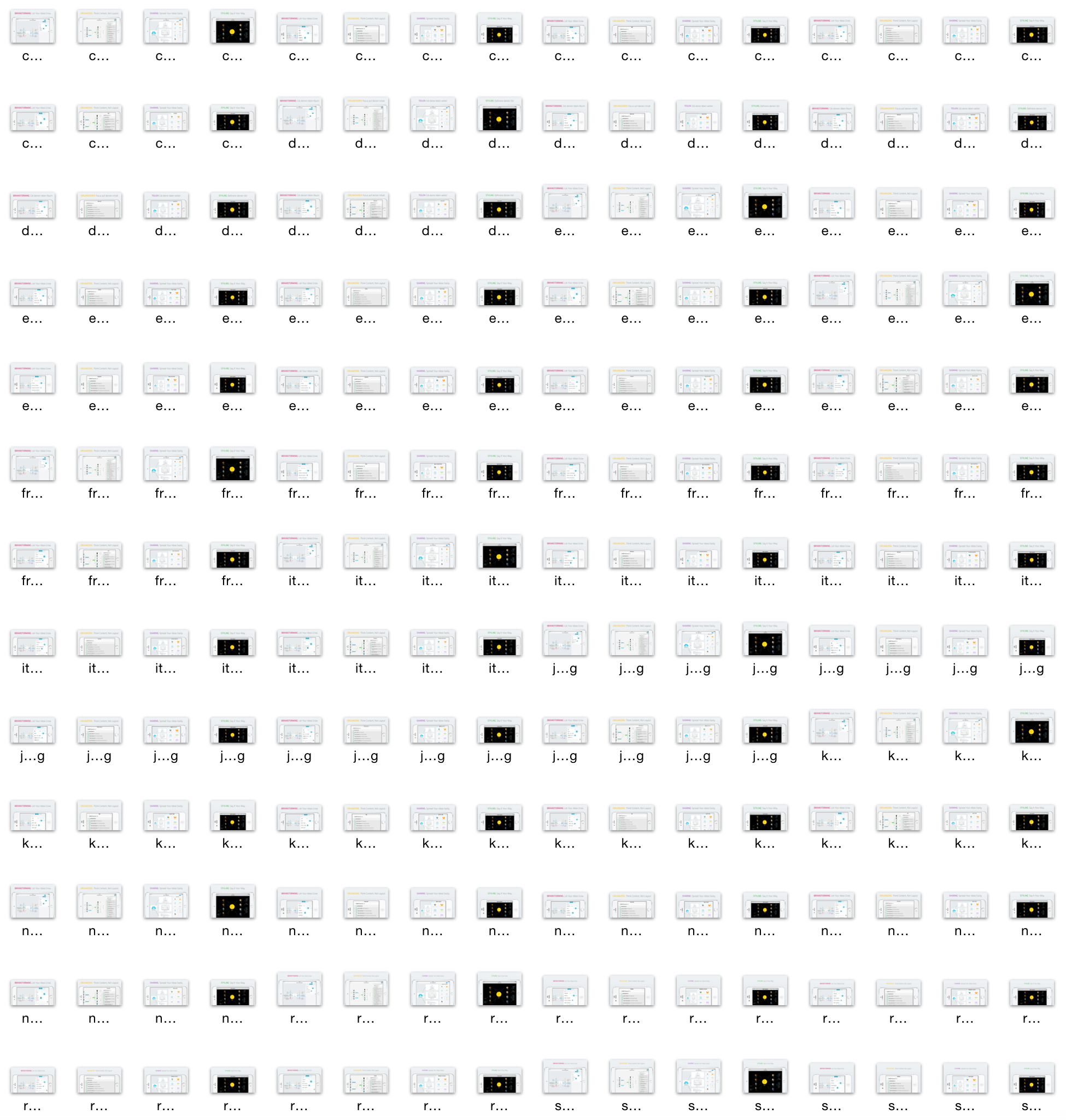

What does this look like for multiple screenshots?

What does this look like when you support multiple languages?

Generating this many screenshots takes hours, even when it is completely automated. The nice thing: You can do something else on your Mac while the screenshots are generated, as long as you don’t need the simulator. Instead of working while the screenshots are generated, you can also take a nap or tweet about fastlane.

How does this magic work?

All MindNode screenshots shown above are created completely automatically using 2 steps:

Creating the Screenshots

Using snapshot you can take localized screenshots on all device types completely automatically. All you have to do is provide a JavaScript UI Automation file to tell snapshot how to navigate in your app and where to take the screenshots. More information can be found on the project page. This project will soon be updated to use UI Tests instead of UI Automation, so you can write the screenshot code in Swift or Objective-C.

This step will create the raw screenshots for all devices in all languages. At this point you could already upload the screenshots to iTunes Connect, but this wouldn’t be so much fun.

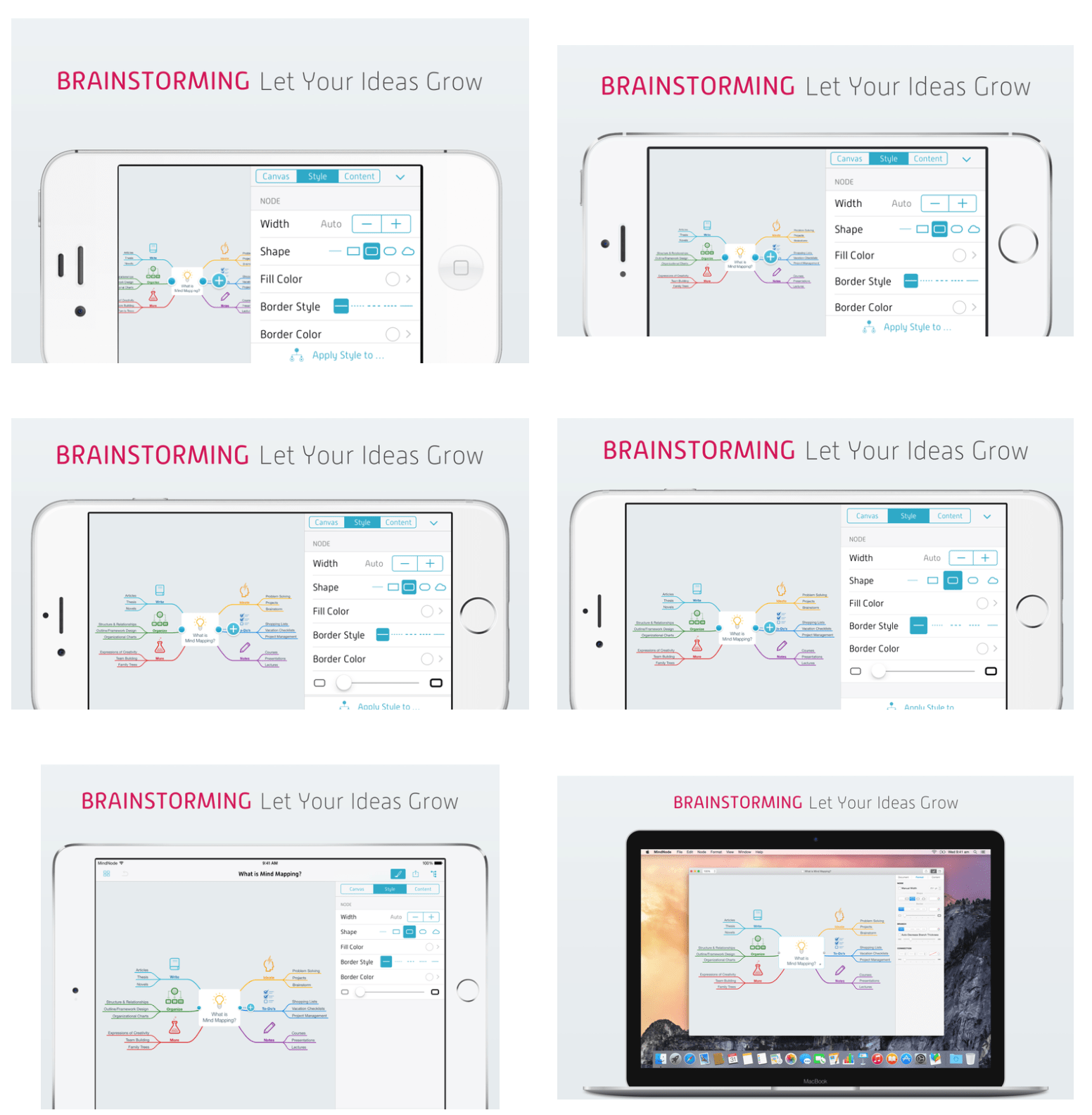

Adding the device frame, background and title

frameit was originally designed to just add device frames around the screenshots. With frameit 2.0 you can now add device frames, a custom background and a title to your screenshots.

- Custom backgrounds

- Use a keyword + title to make the screen look more colorful

- Use your own fonts

- Customise the text colors

- Support for both portrait and landscape screenshots

- Support for iPhone, iPad and Mac screenshots

- Use .strings files to provide translated titles

The same screenshot on the iPhone, iPad and Mac.

Take a closer look at the screenshots above: The iPad’s time is 9:41 and the carrier name is MindNode. The other screenshots don’t have a status bar, as MindNode doesn’t show it on the iPhone in landscape mode.

A timelapse video of snapshot creating the MindNode screenshots.

The generated HTML Summary to quickly get an overview of your app in all languages on all device types.

How can I get started?

To make things easier for you, I prepared an open source setup showing you how to use frameit to generate these nice screenshots, available on GitHub.

The interesting parts are:

- Framefile.json: Contains information about the font family, font color and background image

- Each language folder: Contains a keyword.strings and title.strings, containing the localised text for the title

All you have to do now is run frameit white to frame all the screenshots generated by snapshot.
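To make the layout of those files more concrete, here is a minimal Ruby sketch that writes a sample setup into a temporary folder. The key names in the Framefile (`default`, `keyword`, `title`, `font`, `color`, `background`, `padding`) follow the frameit example project as far as I remember it and should be treated as assumptions, as should the font path, the image path, the screenshot name `Overview` and the titles themselves. Please check the frameit documentation for the exact schema and for the text encoding it expects for `.strings` files.

```ruby
require "json"
require "tmpdir"
require "fileutils"

# Assumed Framefile structure: font family, font color and background image.
FRAMEFILE = {
  "default" => {
    "keyword"    => { "font" => "./fonts/MyFont-Rg.otf" }, # hypothetical font path
    "title"      => { "color" => "#545454" },
    "background" => "./background.jpg",                     # hypothetical image
    "padding"    => 50
  }
}.freeze

# Hypothetical localised texts, one entry per language folder.
TITLES = {
  "en-US" => { "keyword" => "Brainstorm",    "title" => "Collect your ideas" },
  "de-DE" => { "keyword" => "Brainstorming", "title" => "Sammle deine Ideen" }
}.freeze

# Writes Framefile.json plus keyword.strings and title.strings per language.
def write_frameit_setup(root, screenshot_name)
  File.write(File.join(root, "Framefile.json"), JSON.pretty_generate(FRAMEFILE))
  TITLES.each do |language, texts|
    dir = File.join(root, language)
    FileUtils.mkdir_p(dir)
    texts.each do |kind, text|
      # Each line maps a screenshot name to the text shown above it.
      File.write(File.join(dir, "#{kind}.strings"), %("#{screenshot_name}" = "#{text}";\n))
    end
  end
end

Dir.mktmpdir do |root|
  write_frameit_setup(root, "Overview")
  puts Dir.glob("**/*", base: root).sort
end
```

The script prints the resulting file tree, which is the folder structure described in the list above: one Framefile.json at the top and one folder per language with the two .strings files.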

Putting things together

Calling snapshot and frameit one after the other is far too much work, so let’s automate this.

Take a look at the fastlane configuration of MindNode: Fastfile

    lane :screenshots do
      snapshot
      frameit(white: true)
      deliver
    end

To generate new screenshots, frame them and upload them to iTunes Connect, you only have to run:

    fastlane ios screenshots

More Information

snapshot, frameit and deliver are part of fastlane.

Special thanks to MindNode for sponsoring frameit 2.0 and providing the screenshots for this blog post.

Tags: screenshots, snapshot, frameit, automation

fastlane iOS Meetups in Tokyo

In June I was on a trip with friends visiting various cities in Asia, the last stop being Tokyo. As I know from GitHub, some fastlane users are from Tokyo, so I decided to tweet about being in town.

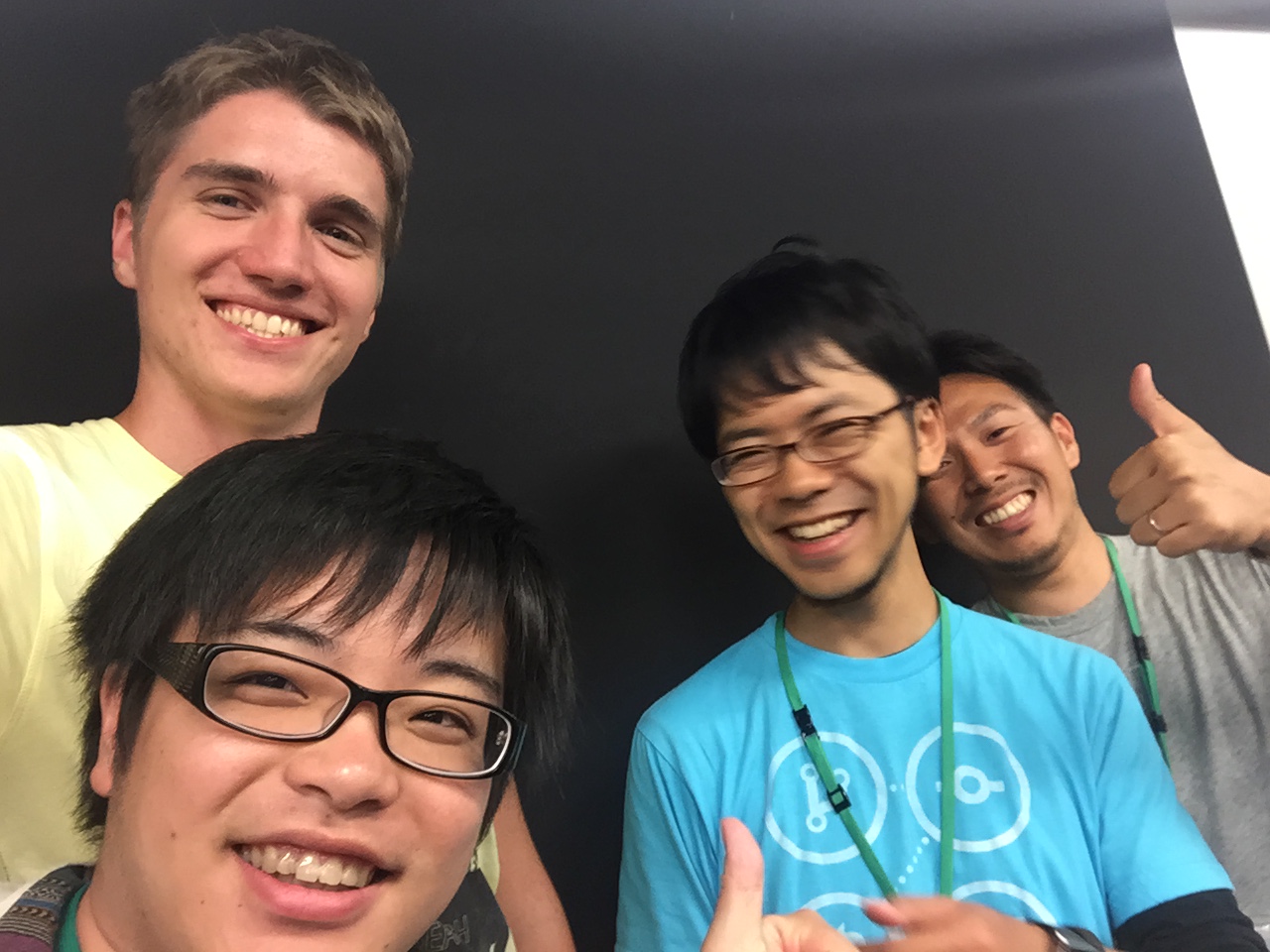

Fortunately, Brian saw my tweet and was kind enough to introduce me to k_katsumi. Kishikawa Katsumi organised 2 fastlane meet-ups, in addition to the Realm meet-up in Tokyo, in just one week.

I was in Tokyo for only a week. On Tuesday we had a fastlane dinner meet-up with 13 iOS developers from Tokyo.

fastlane Dinner on Tuesday

DeployGate

One of fastlane’s built-in integrations is DeployGate, a beta testing service developed here in Tokyo. After the founder Yuki Fujisaki heard I was in Tokyo, we met at the DeployGate HQ.

Realm Meetup Tokyo

Just arrived at the local @realm meeting in Tokyo when I noticed I don't understand a word. Ups 😁 pic.twitter.com/KsNLdPL3ah

— Felix Krause (@KrauseFx) June 25, 2015

I was invited to the local Realm meetup. While I couldn’t understand anything, I really enjoyed speaking to the other developers, some of which already use fastlane in their deployment setup.

fastlane presentation

I gave a short presentation about Continuous Delivery and fastlane. As not everyone here speaks English, @kitasuke was so kind as to interpret my presentation into Japanese.

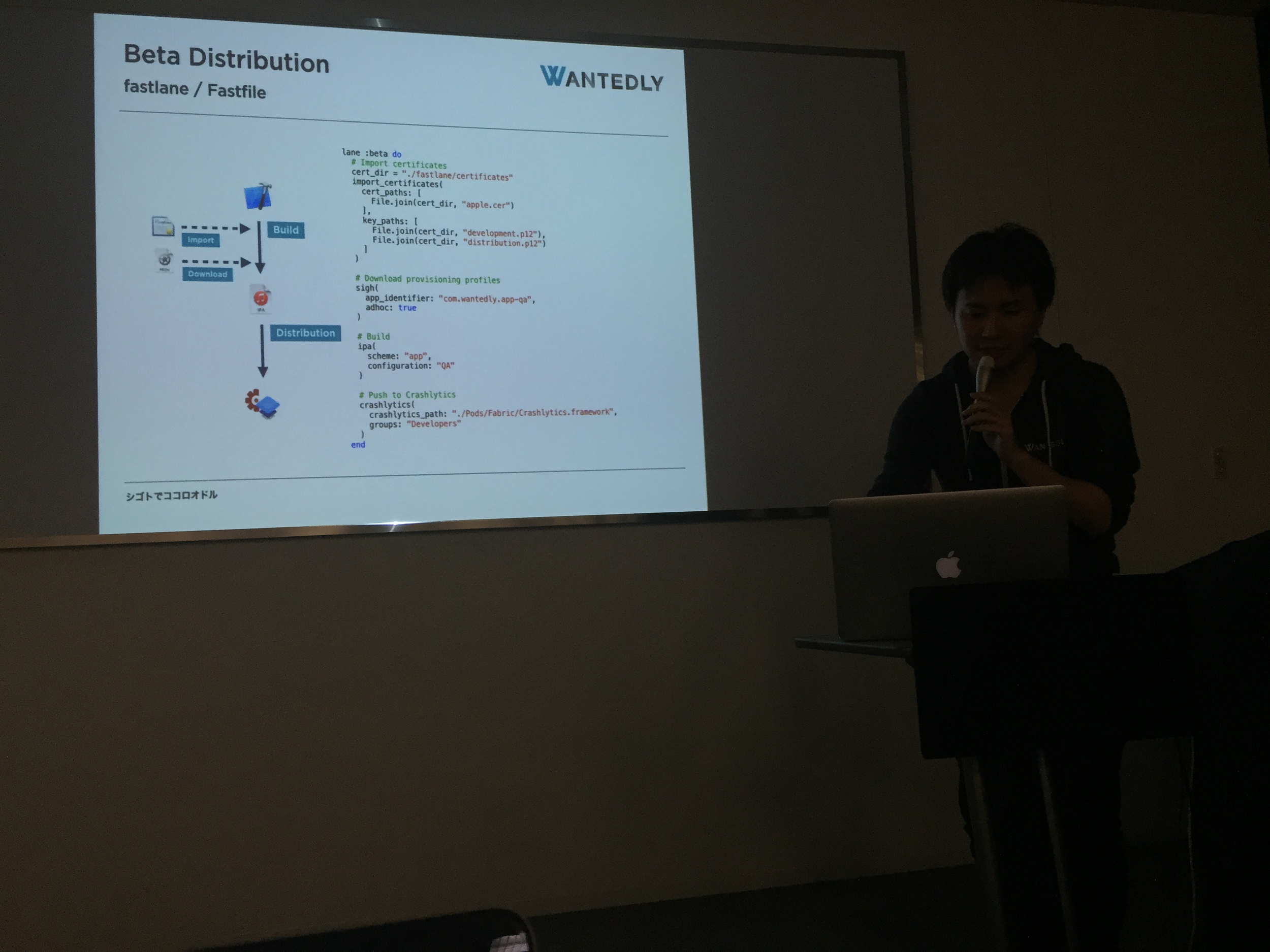

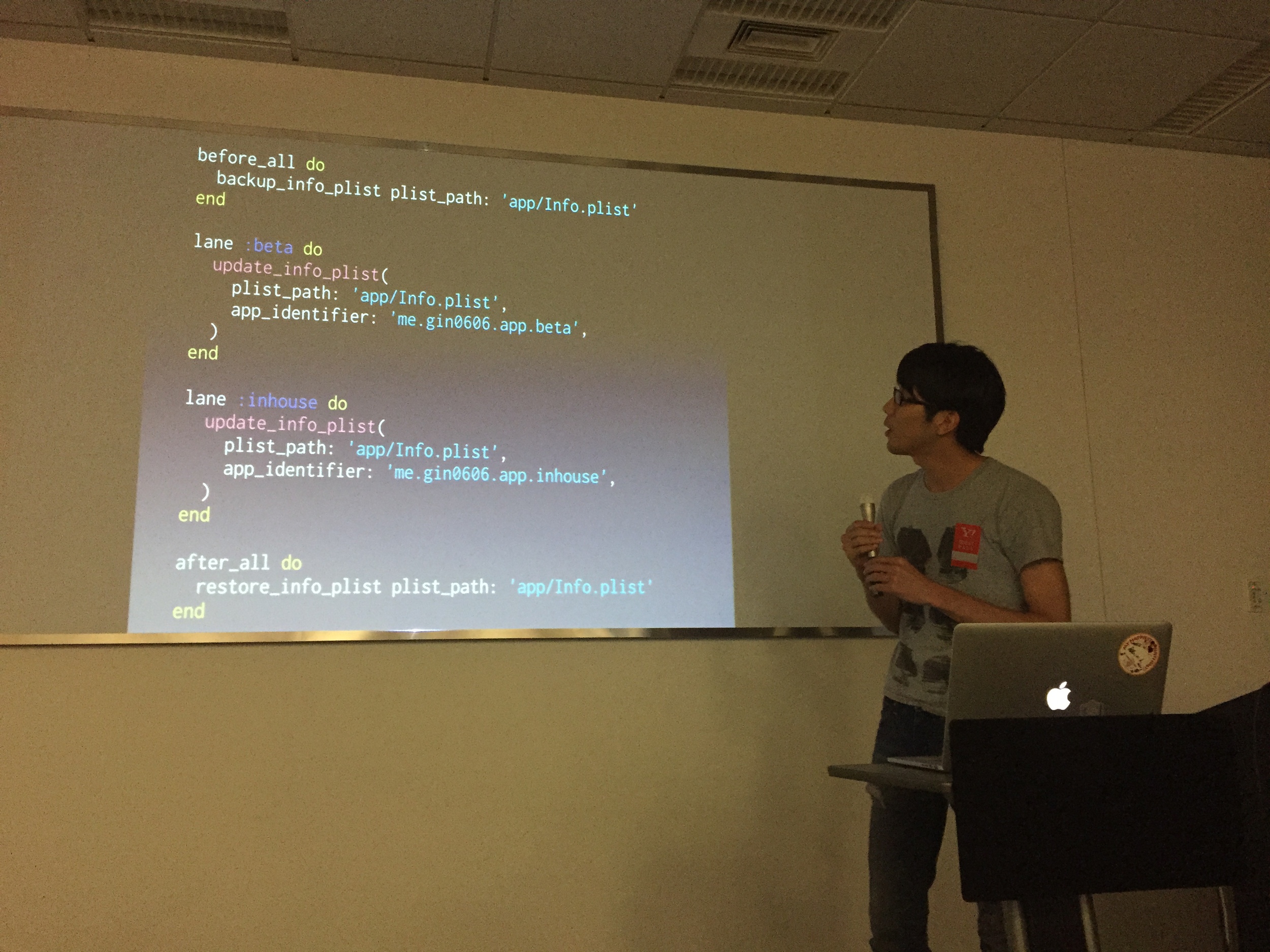

The best part of this meet-up was the blitz talks after my presentation: Other iOS developers shared their experience with fastlane and how they use it in their company.

And of course, we should never forget taking selfies :D

It’s been amazing to meet developers from the other side of the globe using fastlane.

The iOS developer community here in Tokyo is great and super friendly. I really want to come back here again soon :) Thanks everyone for making this possible.

Run Xcode 7 UI Tests from the command line

Get started with UI Tests to automate User Interface tests in iOS 9

Apple announced a new technology called UI Tests, which allows us to implement User Interface tests using Swift or Objective-C code. Up until now the best way to achieve this was to use UI Automation. With UI Tests it’s possible to properly debug issues using Xcode and use Swift or Objective-C without having to deal with JavaScript code.

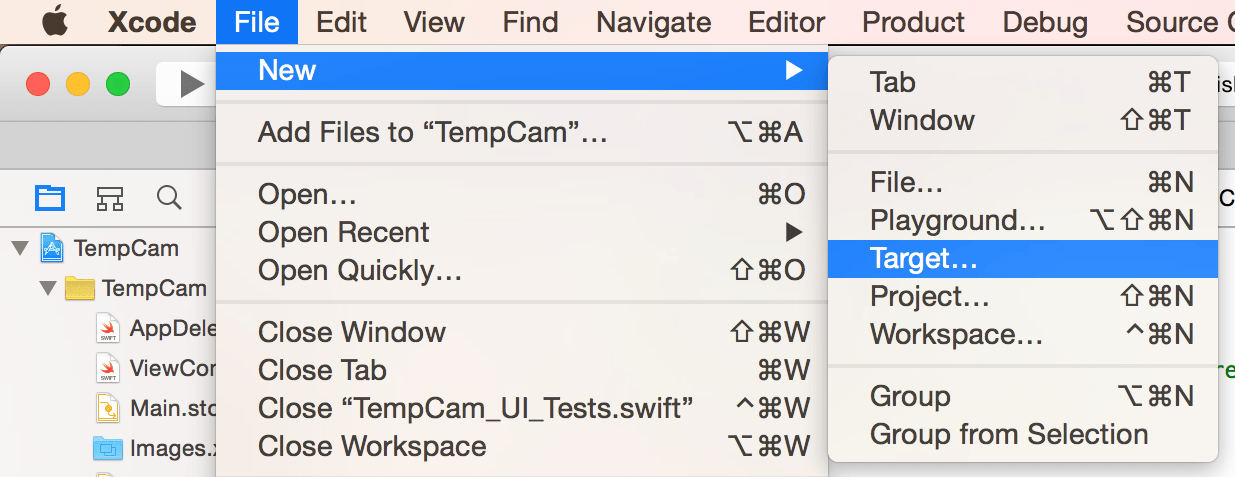

First, you’ll have to create a new target for the UI Tests:

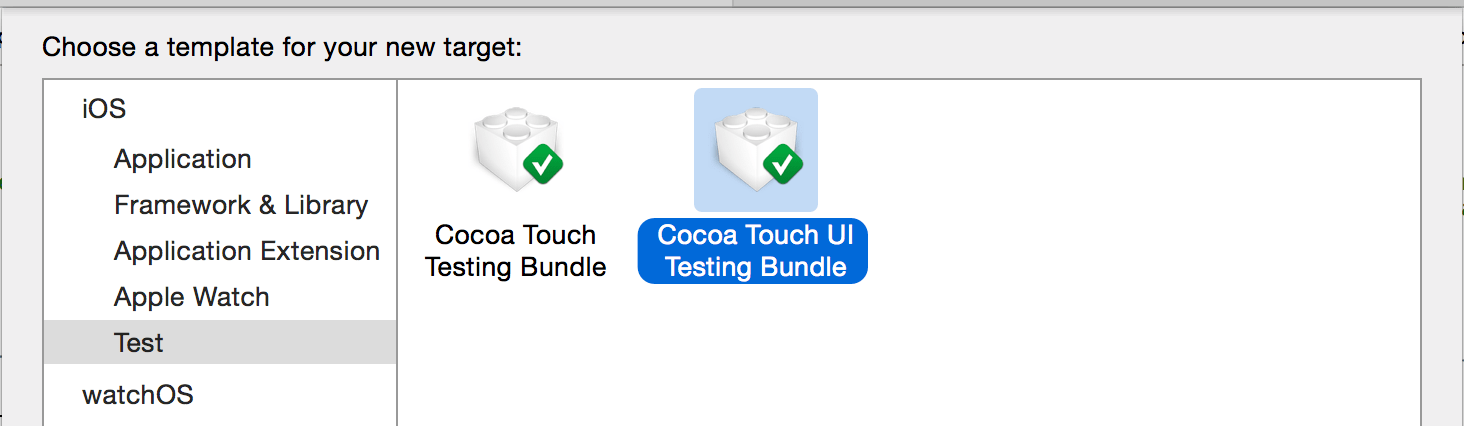

Under the Test section, select the Cocoa Touch UI Testing Bundle:

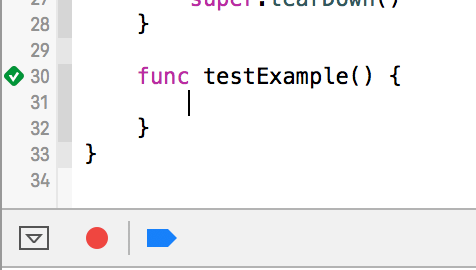

Now open the newly created Project_UI_Tests.swift file in your Project UI Tests folder. At the bottom you have an empty method called testExample. Place the cursor there and click the red record button at the bottom.

This will launch your app. You can now tap around and interact with your application. When you’re finished, click the red button again.

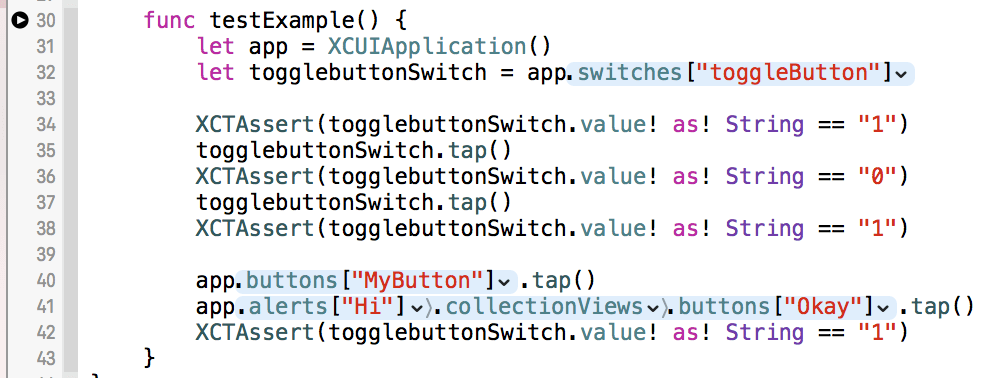

The generated code will look similar to this. I already added some example XCTAsserts between the generated lines. You can already run the tests in Xcode using CMD + U. This will run both your unit tests and your UI Tests.

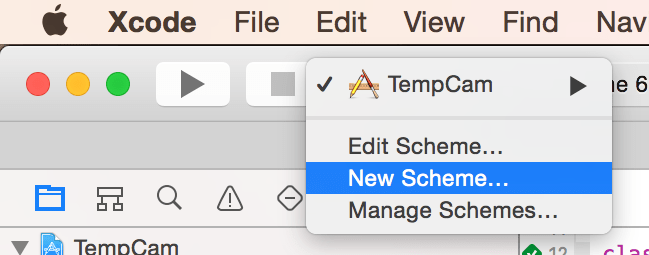

You could now already run the tests using the CLI without any further modification, but we want to have the UI Tests in a separate scheme. Click on your scheme and select New Scheme.

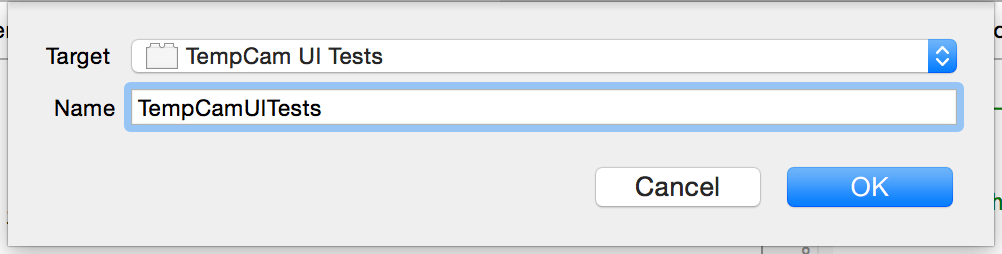

Select the newly created UI Test target and confirm.

If you plan on executing the tests on a CI-server, make sure the newly created scheme has the Shared option enabled. Click on your scheme and choose Manage Schemes to open the dialog.

Launch the tests from the CLI

    xcodebuild -workspace App.xcworkspace \
      -scheme "SchemeName" \
      -destination 'platform=iOS Simulator,name=iPhone 6,OS=9.0' \
      test

It’s important to also define the version of Xcode to use, in our case that’s the latest beta:

    export DEVELOPER_DIR="/Applications/Xcode-beta.app"

You can even make the beta version your new default by running:

    sudo xcode-select --switch "/Applications/Xcode-beta.app"

Example output when running UI Tests from the terminal:

    Test Suite 'All tests' started at 2015-06-18 14:48:42.601
    Test Suite 'TempCam UI Tests.xctest' started at 2015-06-18 14:48:42.602
    Test Suite 'TempCam_UI_Tests' started at 2015-06-18 14:48:42.602
    Test Case '-[TempCam_UI_Tests.TempCam_UI_Tests testExample]' started.
    2015-06-18 14:48:43.676 XCTRunner[39404:602329] Continuing to run tests in the background with task ID 1
    t = 1.99s Wait for app to idle
    t = 2.63s Find the "toggleButton" Switch
    t = 2.65s Tap the "toggleButton" Switch
    t = 2.65s Wait for app to idle
    t = 2.66s Find the "toggleButton" Switch
    t = 2.66s Dispatch the event
    t = 2.90s Wait for app to idle
    t = 3.10s Find the "toggleButton" Switch
    t = 3.10s Tap the "toggleButton" Switch
    t = 3.10s Wait for app to idle
    t = 3.11s Find the "toggleButton" Switch
    t = 3.11s Dispatch the event
    t = 3.34s Wait for app to idle
    t = 3.54s Find the "toggleButton" Switch
    t = 3.54s Tap the "MyButton" Button
    t = 3.54s Wait for app to idle
    t = 3.54s Find the "MyButton" Button
    t = 3.55s Dispatch the event
    t = 3.77s Wait for app to idle
    t = 4.25s Tap the "Okay" Button
    t = 4.25s Wait for app to idle
    t = 4.26s Find the "Okay" Button
    t = 4.28s Dispatch the event
    t = 4.51s Wait for app to idle
    t = 4.51s Find the "toggleButton" Switch
    Test Case '-[TempCam_UI_Tests.TempCam_UI_Tests testExample]' passed (4.526 seconds).
    Test Suite 'TempCam_UI_Tests' passed at 2015-06-18 14:48:47.129.
    Executed 1 test, with 0 failures (0 unexpected) in 4.526 (4.527) seconds
    Test Suite 'TempCam UI Tests.xctest' passed at 2015-06-18 14:48:47.129.
    Executed 1 test, with 0 failures (0 unexpected) in 4.526 (4.528) seconds
    Test Suite 'All tests' passed at 2015-06-18 14:48:47.130.
    Executed 1 test, with 0 failures (0 unexpected) in 4.526 (4.529) seconds
    ** TEST SUCCEEDED **

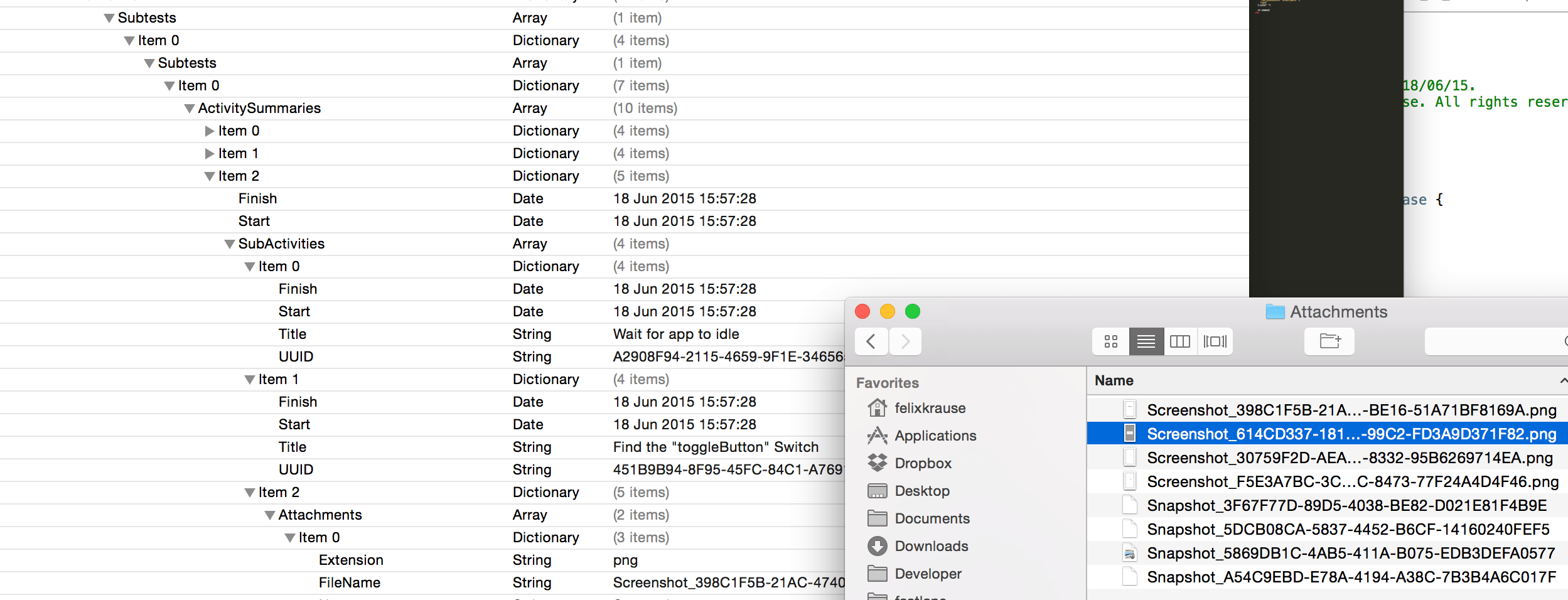

Generating Screenshots

No extra work needed, you get screenshots for free. By appending the derivedDataPath option to your command, you tell Xcode where to store the test results including the generated screenshots.

    xcodebuild -workspace App.xcworkspace \
      -scheme "SchemeName" \
      -destination 'platform=iOS Simulator,name=iPhone 6,OS=9.0' \
      -derivedDataPath './output' \
      test

Xcode will automatically generate screenshots for each test action. For more information about the generated data, take a look at this writeup by Michele.

Next Steps

To create screenshots in all languages and in all device types, you need a tool like snapshot. snapshot still uses UI Automation, which is now deprecated.
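To give an idea of what such a tool has to do for the device part alone, here is a minimal Ruby sketch that builds the xcodebuild call from above once per simulator, each with its own output folder. This is not how snapshot is implemented. The helper name `ui_test_command`, the simulator names and the `output/<device>` folder layout are assumptions for illustration, and switching languages is left out entirely.

```ruby
require "shellwords"

# Simulator names are assumptions; use the names that
# `xcrun simctl list devices` prints on your machine.
DEVICE_NAMES = ["iPhone 5", "iPhone 6", "iPhone 6 Plus"].freeze

# Builds the xcodebuild invocation shown above for one simulator.
def ui_test_command(workspace:, scheme:, device:, os: "9.0")
  [
    "xcodebuild",
    "-workspace", workspace,
    "-scheme", scheme,
    "-destination", "platform=iOS Simulator,name=#{device},OS=#{os}",
    "-derivedDataPath", File.join("output", device.tr(" ", "_")),
    "test"
  ]
end

DEVICE_NAMES.each do |device|
  command = ui_test_command(workspace: "App.xcworkspace", scheme: "SchemeName", device: device)
  # Printed instead of executed so the sketch runs anywhere.
  # On a Mac with Xcode installed you could call system(*command) instead.
  puts Shellwords.join(command)
end
```

Keeping the command as an array of arguments avoids quoting problems with device names that contain spaces.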

I’m currently working on a new version of snapshot to make use of the new UI Tests features. This enables snapshot to show even more detailed results and error messages if something goes wrong. I’m really excited about this change 👍

Update: snapshot now uses UI Tests to generate screenshots and the HTML summary for all languages and devices.

Tags: UI Tests, User Interface, Xcode 7, iOS 9

spaceship - Launching fastlane into the next generation

spaceship is a Ruby library that exposes the Apple Developer Center API. It’s super fast, well tested and supports all of the operations you can do via the browser. Scripting your Developer Center workflow has never been easier!

Up until now, fastlane tools made use of front-end web scraping. This means the tools used a headless web browser to interact with the Apple Developer Portal and iTunes Connect. While this is an easy solution to get started, I quickly ran into the limits of web scraping.

More and more issues were caused by this technique: The tools were slow and users got random timeout errors. With web scraping, the tools would also break immediately after front-end design changes to the websites.

Upgrading fastlane

It was time to implement a better solution: By replacing the headless web browser with a plain HTTP client it was possible to speed up sigh by 90% and make it much more stable at the same time.

This allowed us to stub all HTTP requests to write tests and detect newly introduced errors faster.

What is spaceship?

Instead of implementing the HTTP client right into the individual tools, I decided to separate the communication layer and put it into its own reusable Ruby gem. This allows every developer to make use of it.

spaceship is like Core Data for your Dev Center resources

You don’t care about the communication and how it works. You can simply interact with Ruby objects (e.g. App, Certificate, …) and the changes will automatically be pushed to the Dev Center.
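As a rough illustration of that object-based style, here is a minimal Ruby sketch. The helper `app_summaries` and the `Fake*` stand-ins are hypothetical and only exist so the example runs without the gem, a network connection or an Apple ID. The commented lines at the end show how I would expect the real calls (`Spaceship.login`, `Spaceship.app.all`) to be used based on the spaceship documentation at the time of writing; newer releases may organise them differently, so treat that part as an assumption.

```ruby
# Lists "bundle_id (name)" for every app the client knows about.
# `client` is expected to behave like the Spaceship module after login:
# it responds to `app.all` and returns objects with `bundle_id` and `name`.
def app_summaries(client)
  client.app.all.map { |app| "#{app.bundle_id} (#{app.name})" }
end

# Stand-in objects so the sketch runs offline.
FakeApp    = Struct.new(:bundle_id, :name)
FakeApps   = Struct.new(:all)
FakeClient = Struct.new(:app)

offline = FakeClient.new(FakeApps.new([FakeApp.new("com.example.demo", "Demo")]))
puts app_summaries(offline)

# With the real gem (assumed usage, credentials are placeholders):
#   require "spaceship"
#   Spaceship.login("user@example.com", "password")
#   puts app_summaries(Spaceship)
```

The point of the sketch is that the calling code only deals with plain Ruby objects and never with HTTP requests or HTML.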

How can I get started?

There is a pre-release version of sigh available to try, check out the announcement to upgrade.

If you want to try spaceship directly, check out the spaceship project page, which has easy-to-follow documentation on how to use it. You’ll have to install spaceship before you can use it from irb (Interactive Ruby Shell).

What’s next?

The plan is to migrate all fastlane tools to make use of spaceship. In the long term, spaceship will also implement the iTunes Connect JSON API (which is already documented on GitHub).

Special thanks to zeropush.com for sponsoring spaceship.

Visit spaceship.airforce for more details.